The last few years have seen rapid development in the field of deep learning. Although hardware has improved, such as with the latest generation of accelerators from NVIDIA and Amazon, advanced machine learning (ML) practitioners still regularly encounter issues deploying their large deep learning models for applications such as natural language processing (NLP).

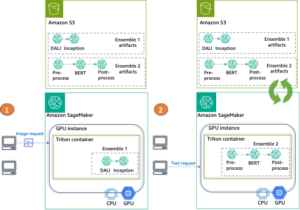

In an earlier post, we discussed capabilities and configurable settings in Amazon SageMaker model deployment that can make inference with these large models easier. Today, we announce a new Amazon SageMaker Deep Learning Container (DLC) that you can use to get started with large model inference in a matter of minutes. This DLC packages some of the most popular open-source libraries for model parallel inference, such as DeepSpeed and Hugging Face Accelerate.

In this post, we use a new SageMaker large model inference DLC to deploy two of the most popular large NLP models: BigScience’s BLOOM-176B and Meta’s OPT-30B from the Hugging Face repository. In particular, we use Deep Java Library (DJL) serving and tensor parallelism techniques from DeepSpeed to achieve 0.1 second latency per token in a text generation use case.

You can find our complete example notebooks in our GitHub repository.

Large model inference techniques

Language models have recently exploded in both size and popularity. With easy access from model zoos such as Hugging Face and improved accuracy and performance in NLP tasks such as classification and text generation, practitioners are increasingly reaching for these large models. However, large models are often too big to fit within the memory of a single accelerator. For example, the BLOOM-176B model can require more than 350 gigabytes of accelerator memory, which far exceeds the capacity of hardware accelerators available today. This necessitates the use of model parallel techniques from libraries like DeepSpeed and Hugging Face Accelerate to distribute a model across multiple accelerators for inference. In this post, we use the SageMaker large model inference container to generate and compare latency and throughput performance using these two open-source libraries.

DeepSpeed and Accelerate use different techniques to optimize large language models for inference. The key difference is DeepSpeed’s use of optimized kernels. These kernels can dramatically improve inference latency by reducing bottlenecks in the computation graph of the model. Optimized kernels can be difficult to develop and are typically specific to a particular model architecture; DeepSpeed supports popular large models such as OPT and BLOOM with these optimized kernels. In contrast, Hugging Face’s Accelerate library doesn’t include optimized kernels at the time of writing. As we discuss in our results section, this difference is responsible for much of the performance edge that DeepSpeed has over Accelerate.

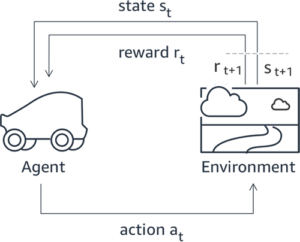

A second difference between DeepSpeed and Accelerate is the type of model parallelism. Accelerate uses pipeline parallelism to partition a model between its hidden layers, whereas DeepSpeed uses tensor parallelism to partition the layers themselves. Pipeline parallelism is a flexible approach that supports more model types and can improve throughput when larger batch sizes are used. Tensor parallelism requires more communication between GPUs because model layers can be spread across multiple devices, but can improve inference latency by engaging multiple GPUs simultaneously. You can learn more about parallelism techniques in Introduction to Model Parallelism and Model Parallelism.

Solution overview

To effectively host large language models, we need features and support in the following key areas:

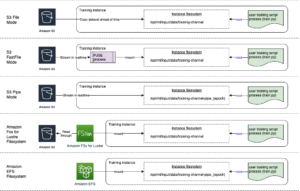

- Building and testing solutions – Given the iterative nature of ML development, we need the ability to build, rapidly iterate, and test how the inference endpoint will behave when these models are hosted, including the ability to fail fast. These models can typically be hosted only on larger instances like p4dn or g5, and given the size of the models, it can take a while to spin up an inference instance and run any test iteration. Local testing usually has constraints because you need a similar instance in size to test, and these models aren’t easy to obtain.

- Deploying and running at scale – The model files need to be loaded onto the inference instances, which presents a challenge in itself given the size. For example, the tar archive for BLOOM-176B takes about 1 hour to create and another hour to load. We need an alternate mechanism to allow easy access to the model files.

- Loading the model as singleton – For a multi-worker process, we need to ensure the model gets loaded only once so we don’t run into race conditions and spend unnecessary resources. In this post, we show a way to load directly from Amazon Simple Storage Service (Amazon S3). However, this only works if we use the default settings of the DJL. Furthermore, any scaling of the endpoints needs to be able to spin up in a few minutes, which calls for reconsidering how the models might be loaded and distributed.

- Sharding frameworks – These models typically need to be sharded, usually by tensor parallelism or pipeline sharding, which are the typical sharding techniques; advanced concepts like ZeRO sharding are built on top of tensor sharding. For more information about sharding techniques, refer to Model Parallelism. To achieve this, we can have various combinations and use frameworks from NVIDIA, DeepSpeed, and others. This requires the ability to test bring-your-own-container (BYOC) or first-party (1P) containers, iterate over solutions, and run benchmarking tests. You might also want to test various hosting options like asynchronous, serverless, and others.

- Hardware selection – Your choice in hardware is determined by all the aforementioned points and further traffic patterns, use case needs, and model sizes.

In this post, we use DeepSpeed’s optimized kernels and tensor parallelism techniques to host BLOOM-176B and OPT-30B on SageMaker. We also compare results from Accelerate to demonstrate the performance benefits of optimized kernels and tensor parallelism. For more information on DeepSpeed and Accelerate, refer to DeepSpeed Inference: Enabling Efficient Inference of Transformer Models at Unprecedented Scale and Incredibly Fast BLOOM Inference with DeepSpeed and Accelerate.

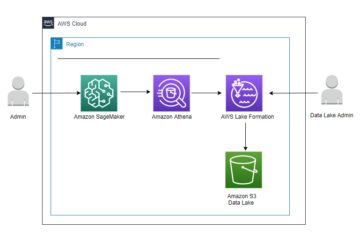

We use DJLServing as the model serving solution in this example. DJLServing is a high-performance universal model serving solution powered by the Deep Java Library (DJL) that is programming language agnostic. To learn more about the DJL and DJLServing, refer to Deploy large models on Amazon SageMaker using DJLServing and DeepSpeed model parallel inference.

It’s worth noting that optimized kernels can result in precision changes and a modified computation graph, which could theoretically result in changed model behavior. Although this could occasionally change the inference outcome, we do not expect these differences to materially impact the basic evaluation metrics of a model. Nevertheless, practitioners are advised to confirm the model outputs are as expected when using these kernels.

The following steps demonstrate how to deploy a BLOOM-176B model in SageMaker using DJLServing and a SageMaker large model inference container. The complete example is also available in our GitHub repository.

Using the DJLServing SageMaker DLC image

Use the following code to reference the DJLServing SageMaker DLC image, replacing the region with the AWS Region in which you are running the notebook:
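As a minimal sketch, the image URI can be assembled from the Region name. The registry account ID and image tag below are assumptions based on the public AWS Deep Learning Containers naming scheme around the time of this post, and `build_djl_image_uri` is a hypothetical helper; confirm both values against the current list of available images before relying on them.

```python
# Assumed values: confirm the account ID and tag against the current
# AWS Deep Learning Containers image list before use.
DLC_ACCOUNT_ID = "763104351884"
DJL_DEEPSPEED_TAG = "0.19.0-deepspeed0.7.3-cu113"


def build_djl_image_uri(region: str, tag: str = DJL_DEEPSPEED_TAG) -> str:
    """Return the ECR URI of the DJLServing DLC image for the given Region."""
    if not region:
        raise ValueError("region must be a non-empty AWS Region name")
    return f"{DLC_ACCOUNT_ID}.dkr.ecr.{region}.amazonaws.com/djl-inference:{tag}"


# Replace us-east-1 with the Region your notebook runs in.
inference_image_uri = build_djl_image_uri("us-east-1")
print(inference_image_uri)
```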

Create our model file

First, we create a file called serving.properties that contains only one line of code. This tells the DJL model server to use the DeepSpeed engine. The file contains the following code:

serving.properties is a file defined by DJLServing that is used to set per-model configuration.
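The one-line file described above could be generated as follows. This is a sketch: the `engine=DeepSpeed` setting is the single line that selects the DeepSpeed engine, while `write_serving_properties` is a hypothetical helper introduced only for illustration.

```python
from pathlib import Path


def write_serving_properties(code_dir: str = "code") -> Path:
    """Create code_dir if needed and write a one-line serving.properties."""
    target_dir = Path(code_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    properties_path = target_dir / "serving.properties"
    # Single setting: select DeepSpeed as the engine for this model.
    properties_path.write_text("engine=DeepSpeed\n")
    return properties_path


print(write_serving_properties().read_text())
```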

Next, we create our model.py file, which defines the code needed to load and then serve the model. In our code, we read in the TENSOR_PARALLEL_DEGREE environment variable (the default value is 1). This sets the number of devices over which the tensor parallel modules are distributed. Note that DeepSpeed provides a few built-in partition definitions, including one for BLOOM models. We use it by specifying replace_method and replace_with_kernel_inject. If you have a customized model and need DeepSpeed to partition it effectively, you need to change replace_with_kernel_inject to false and add injection_policy to make the runtime partition work. For more information, refer to Initializing for Inference. For our example, we used the pre-partitioned BLOOM model on DeepSpeed.
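The following sketch shows how the options described above might be assembled in model.py. It assumes the argument names accepted by `deepspeed.init_inference` in DeepSpeed 0.7.x (`mp_size`, `replace_method`, `replace_with_kernel_inject`, `injection_policy`), which may differ in newer releases; `build_deepspeed_kwargs` is a hypothetical helper and not part of the original example.

```python
import os


def build_deepspeed_kwargs(custom_injection_policy=None) -> dict:
    """Assemble keyword arguments for deepspeed.init_inference."""
    # Number of devices the tensor parallel modules are spread across (default 1).
    tensor_parallel = int(os.getenv("TENSOR_PARALLEL_DEGREE", "1"))
    kwargs = {"mp_size": tensor_parallel}
    if custom_injection_policy is None:
        # Supported architectures (such as BLOOM): use the built-in
        # partition definitions and optimized kernels.
        kwargs["replace_method"] = "auto"
        kwargs["replace_with_kernel_inject"] = True
    else:
        # Customized model: turn off kernel injection and describe how
        # the layers should be partitioned.
        kwargs["replace_with_kernel_inject"] = False
        kwargs["injection_policy"] = custom_injection_policy
    return kwargs


# Inside model.py, after loading the model (requires the deepspeed package):
#   model = deepspeed.init_inference(model, **build_deepspeed_kwargs())
print(build_deepspeed_kwargs())
```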

Secondly, in the model.py file, we also load the model from Amazon S3 after the endpoint has been spun up. The model is loaded into the /tmp space on the container because SageMaker maps /tmp to the Amazon Elastic Block Store (Amazon EBS) volume that is mounted when we specify the endpoint creation parameter VolumeSizeInGB. For instances like p4dn, which come pre-built with the volume instance, we can continue to leverage /tmp on the container. See the following code:
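One way to copy the model files into /tmp is sketched below. It is not the code from the original notebook: `download_model_from_s3` is a hypothetical helper, the bucket and prefix in the usage comment are placeholders, and the prefix is assumed to be a folder-style prefix ending in `/`. The S3 client is passed in so the function only relies on the standard boto3 `get_paginator` and `download_file` calls.

```python
import os


def download_model_from_s3(s3_client, bucket: str, prefix: str,
                           local_dir: str = "/tmp/model") -> list:
    """Copy every object under s3://bucket/prefix into local_dir."""
    downloaded = []
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        for obj in page.get("Contents", []):
            key = obj["Key"]
            if key.endswith("/"):
                continue  # skip folder placeholder objects
            # Preserve the layout below the prefix inside local_dir.
            relative = os.path.relpath(key, prefix)
            local_path = os.path.join(local_dir, relative)
            os.makedirs(os.path.dirname(local_path), exist_ok=True)
            s3_client.download_file(bucket, key, local_path)
            downloaded.append(local_path)
    return downloaded


# Usage inside model.py (requires boto3 and read access to the bucket):
#   import boto3
#   download_model_from_s3(boto3.client("s3"), "my-bucket", "bloom-176b/")
```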

DJLServing manages the runtime installation of any pip packages defined in requirements.txt. This file will have:

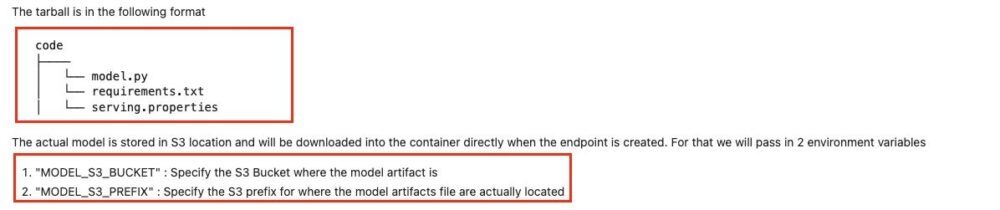

We have created a directory called code, and the model.py, serving.properties, and requirements.txt files are already created in this directory. To view the files, you can run the following code from the terminal:

The following figure shows the structure of the model.tar.gz.

Lastly, we create the model file and upload it to Amazon S3:
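A minimal sketch of the packaging step, assuming the archive should unpack to a top-level code/ folder. `package_model_code` is a hypothetical helper, and the bucket and key in the upload comment are placeholders.

```python
import tarfile
from pathlib import Path


def package_model_code(code_dir: str = "code",
                       archive_path: str = "model.tar.gz") -> str:
    """Bundle the code directory into a gzip-compressed tarball."""
    source = Path(code_dir)
    if not source.is_dir():
        raise FileNotFoundError(f"{code_dir} is not a directory")
    with tarfile.open(archive_path, "w:gz") as archive:
        # Keep the top-level folder name so the archive unpacks to code/...
        archive.add(source, arcname=source.name)
    return archive_path


# Upload step (requires boto3 and write access; names are placeholders):
#   import boto3
#   boto3.client("s3").upload_file("model.tar.gz", "my-bucket", "lmi/model.tar.gz")
```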

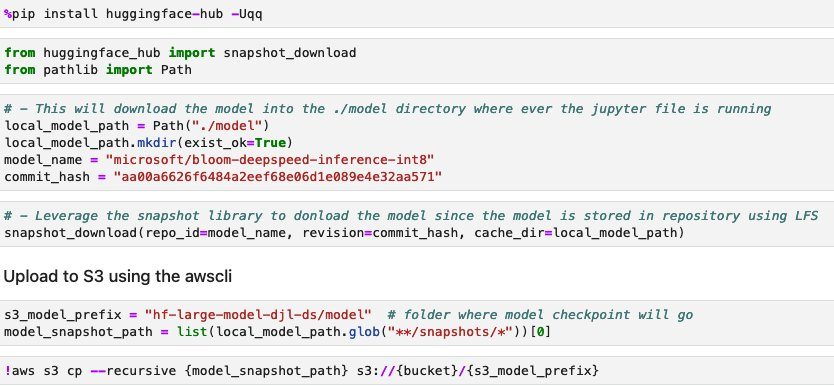

Download and store the model from Hugging Face (Optional)

We have provided the steps in this section in case you want to download the model to Amazon S3 and use it from there. The steps are provided in the Jupyter file on GitHub. The following screenshot shows a snapshot of the steps.

Create a SageMaker model

We now create a SageMaker model. We use the Amazon Elastic Container Registry (Amazon ECR) image and the model artifact from the previous step to create the SageMaker model. In the model setup, we configure TENSOR_PARALLEL_DEGREE=8, which means the model is partitioned across 8 GPUs. See the following code:
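The request for this step might look like the following sketch. The field names follow the SageMaker CreateModel API; `build_create_model_request` and all names passed to it are hypothetical placeholders, so substitute your own role, image URI, and S3 location.

```python
def build_create_model_request(model_name: str, role_arn: str,
                               image_uri: str, model_data_url: str,
                               tensor_parallel_degree: int = 8) -> dict:
    """Return keyword arguments for the SageMaker CreateModel API."""
    return {
        "ModelName": model_name,
        "ExecutionRoleArn": role_arn,
        "PrimaryContainer": {
            "Image": image_uri,
            "ModelDataUrl": model_data_url,
            # Environment values must be strings; 8 partitions the model
            # across 8 GPUs.
            "Environment": {
                "TENSOR_PARALLEL_DEGREE": str(tensor_parallel_degree)
            },
        },
    }


# Usage (requires boto3 and SageMaker permissions):
#   import boto3
#   sm_client = boto3.client("sagemaker")
#   sm_client.create_model(**build_create_model_request(
#       "bloom-djl-ds", role_arn, inference_image_uri, s3_code_artifact))
```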

After you run the preceding cell in the Jupyter file, you see output similar to the following:

Create a SageMaker endpoint

You can use any instances with multiple GPUs for testing. In this demo, we use a p4d.24xlarge instance. In the following code, note how we set the ModelDataDownloadTimeoutInSeconds, ContainerStartupHealthCheckTimeoutInSeconds, and VolumeSizeInGB parameters to accommodate the large model size. The VolumeSizeInGB parameter is applicable to GPU instances that support EBS volume attachment.
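The three parameters mentioned above belong to the production variant of the endpoint configuration. The sketch below builds such a request; the timeout value, variant name, and the `build_endpoint_config_request` helper are illustrative assumptions rather than the values used in the original notebook.

```python
def build_endpoint_config_request(config_name: str, model_name: str,
                                  instance_type: str = "ml.p4d.24xlarge",
                                  timeout_seconds: int = 2400,
                                  volume_size_gb=None) -> dict:
    """Return keyword arguments for the SageMaker CreateEndpointConfig API."""
    variant = {
        "VariantName": "variant1",
        "ModelName": model_name,
        "InstanceType": instance_type,
        "InitialInstanceCount": 1,
        # Allow extra time to download and load a very large model.
        "ModelDataDownloadTimeoutInSeconds": timeout_seconds,
        "ContainerStartupHealthCheckTimeoutInSeconds": timeout_seconds,
    }
    if volume_size_gb is not None:
        # Only set this for instance types that support EBS volume attachment.
        variant["VolumeSizeInGB"] = volume_size_gb
    return {"EndpointConfigName": config_name, "ProductionVariants": [variant]}


# Usage (requires boto3 and SageMaker permissions):
#   sm_client.create_endpoint_config(
#       **build_endpoint_config_request("bloom-config", "bloom-djl-ds"))
```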

Lastly, we create a SageMaker endpoint:

You see it printed out in the following code:

Starting the endpoint might take a while. You can try a few more times if you run into the InsufficientInstanceCapacity error, or you can raise a request with AWS to increase the limit in your account.

Performance tuning

If you intend to use this post and the accompanying notebook with a different model, you may want to explore some of the tunable parameters that SageMaker, DeepSpeed, and the DJL offer. Iteratively experimenting with these parameters can have a material impact on the latency, throughput, and cost of your hosted large model. To learn more about tuning parameters such as the number of workers, degree of tensor parallelism, job queue size, and others, refer to DJL Serving configurations and Deploy large models on Amazon SageMaker using DJLServing and DeepSpeed model parallel inference.

Results

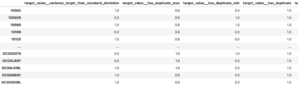

In this post, we used DeepSpeed to host BLOOM-176B and OPT-30B on SageMaker ML instances. The following table summarizes our performance results, including a comparison with Hugging Face’s Accelerate. Latency reflects the number of milliseconds it takes to produce a 256-token string four times (batch_size=4) from the model. Throughput reflects the number of tokens produced per second for each test. For Hugging Face Accelerate, we used the library’s default loading with GPU memory mapping. For DeepSpeed, we used its faster checkpoint loading mechanism.

| Model | Library | Model Precision | Batch Size | Parallel Degree | Instance | Time to Load (s) | Latency (4 x 256 Token Output) P50 (ms) | P90 (ms) | P99 (ms) | Throughput (tokens/sec) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| BLOOM-176B | DeepSpeed | INT8 | 4 | 8 | p4d.24xlarge | 74.9 | 27,564 | 27,580 | 32,179 | 37.1 |
| BLOOM-176B | Accelerate | INT8 | 4 | 8 | p4d.24xlarge | 669.4 | 92,694 | 92,735 | 103,292 | 11.0 |
| OPT-30B | DeepSpeed | FP16 | 4 | 4 | g5.24xlarge | 239.4 | 11,299 | 11,302 | 11,576 | 90.6 |
| OPT-30B | Accelerate | FP16 | 4 | 4 | g5.24xlarge | 533.8 | 63,734 | 63,737 | 67,605 | 16.1 |

From a latency perspective, DeepSpeed is about 3.4 times faster for BLOOM-176B and 5.6 times faster for OPT-30B than Accelerate. DeepSpeed’s optimized kernels are responsible for much of this difference in latency. Given these results, we recommend using DeepSpeed over Accelerate if your model of choice is supported.

It’s also worth noting that model loading times with DeepSpeed were much shorter, making it a better option if you anticipate needing to quickly scale up your number of endpoints. Accelerate’s more flexible pipeline parallelism technique may be a better option if you have models or model precisions that aren’t supported by DeepSpeed.

These results also demonstrate the difference in latency and throughput of different model sizes. In our tests, OPT-30B generates 2.4 times as many tokens per unit time as BLOOM-176B on an instance type that is more than three times cheaper. On a price per unit throughput basis, OPT-30B on a g5.24xlarge instance is 8.9 times better than BLOOM-176B on a p4d.24xlarge instance. If you have strict latency, throughput, or cost limitations, consider using the smallest model possible that will still achieve functional requirements.
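The throughput and speedup figures quoted in this section can be cross-checked from the P50 latencies in the results table. The sketch below assumes that throughput is the 4 x 256 generated tokens divided by the P50 latency, which reproduces the reported numbers; the helper names are illustrative only.

```python
TOKENS_PER_REQUEST = 4 * 256  # batch_size x tokens generated per sequence

# P50 latency in milliseconds, copied from the results table.
p50_latency_ms = {
    ("BLOOM-176B", "DeepSpeed"): 27_564,
    ("BLOOM-176B", "Accelerate"): 92_694,
    ("OPT-30B", "DeepSpeed"): 11_299,
    ("OPT-30B", "Accelerate"): 63_734,
}


def throughput_tokens_per_sec(latency_ms: float) -> float:
    """Tokens produced per second for one 4 x 256 token request."""
    return TOKENS_PER_REQUEST / (latency_ms / 1000.0)


def deepspeed_speedup(model: str) -> float:
    """How many times lower the DeepSpeed P50 latency is than Accelerate's."""
    return p50_latency_ms[(model, "Accelerate")] / p50_latency_ms[(model, "DeepSpeed")]


for (model, library), latency in p50_latency_ms.items():
    print(f"{model} / {library}: {throughput_tokens_per_sec(latency):.1f} tokens/sec")

for model in ("BLOOM-176B", "OPT-30B"):
    print(f"{model}: DeepSpeed is {deepspeed_speedup(model):.1f}x faster")

# Per-token latency of one BLOOM-176B sequence with DeepSpeed, in ms.
print(p50_latency_ms[("BLOOM-176B", "DeepSpeed")] / 256)
```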

Clean up

As a best practice, we recommend always deleting idle instances. The following code shows you how to delete the instances.

Optionally, delete the model checkpoint from your S3 bucket.
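A sketch of both cleanup steps follows. The clients are passed in so the functions use only standard boto3 calls (`delete_endpoint`, `delete_endpoint_config`, `delete_model`, `list_objects_v2`, `delete_object`); the function names and the resource names in the usage comment are hypothetical placeholders.

```python
def delete_endpoint_resources(sm_client, endpoint_name: str,
                              endpoint_config_name: str,
                              model_name: str) -> None:
    """Delete the endpoint, then its configuration, then the model."""
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=endpoint_config_name)
    sm_client.delete_model(ModelName=model_name)


def delete_s3_prefix(s3_client, bucket: str, prefix: str) -> int:
    """Delete every object under the prefix and return how many were removed."""
    deleted = 0
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        for obj in page.get("Contents", []):
            s3_client.delete_object(Bucket=bucket, Key=obj["Key"])
            deleted += 1
    return deleted


# Usage (requires boto3 and the matching permissions):
#   import boto3
#   delete_endpoint_resources(boto3.client("sagemaker"),
#                             "bloom-endpoint", "bloom-config", "bloom-djl-ds")
#   delete_s3_prefix(boto3.client("s3"), "my-bucket", "bloom-176b/")
```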

Conclusion

In this post, we demonstrated how to use SageMaker large model inference containers to host two large language models, BLOOM-176B and OPT-30B. We used DeepSpeed’s model parallel techniques with multiple GPUs on a single SageMaker ML instance.

For more details about Amazon SageMaker and its large model inference capabilities, refer to Amazon SageMaker now supports deploying large models through configurable volume size and timeout quotas and Real-time inference.

About the authors

Simon Zamarin is an AI/ML Solutions Architect whose main focus is helping customers extract value from their data assets. In his spare time, Simon enjoys spending time with family, reading sci-fi, and working on various DIY house projects.


Rupinder Grewal is a Sr AI/ML Specialist Solutions Architect with AWS. He currently focuses on model serving and MLOps on SageMaker. Prior to this role, he worked as a machine learning engineer building and hosting models. Outside of work, he enjoys playing tennis and biking on mountain trails.


Frank Liu is a Software Engineer for AWS Deep Learning. He focuses on building innovative deep learning tools for software engineers and scientists. In his spare time, he enjoys hiking with friends and family.


Alan Tan is a Senior Product Manager with SageMaker, leading efforts on large model inference. He is passionate about applying machine learning to the area of analytics. Outside of work, he enjoys the outdoors.


Dhawal Patel is a Principal Machine Learning Architect at AWS. He has worked with organizations ranging from large enterprises to mid-sized startups on problems related to distributed computing and artificial intelligence. He focuses on deep learning, including the NLP and computer vision domains. He helps customers achieve high-performance model inference on SageMaker.


Qing Lan is a Software Development Engineer at AWS. He has worked on several challenging products at Amazon, including high-performance ML inference solutions and a high-performance logging system. Qing’s team successfully launched the first billion-parameter model in Amazon Advertising with a very low latency requirement. Qing has in-depth knowledge of infrastructure optimization and deep learning acceleration.


Qingwei Li is a Machine Learning Specialist at Amazon Web Services. He received his PhD in Operations Research after he broke his advisor’s research grant account and failed to deliver the Nobel Prize he promised. Currently, he helps customers in the financial services and insurance industry build machine learning solutions on AWS. In his spare time, he likes reading and teaching.


Robert Van Dusen is a Senior Product Manager with Amazon SageMaker. He leads deep learning model optimization for applications such as large model inference.


Siddharth Venkatesan is a Software Engineer in AWS Deep Learning. He currently focuses on building solutions for large model inference. Prior to AWS, he worked in the Amazon Grocery organization building new payment features for customers worldwide. Outside of work, he enjoys skiing, the outdoors, and watching sports.

