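In 2022, Amazon Web Services added support for NVIDIA GPU metrics in Amazon CloudWatch, making it possible to push metrics from the Amazon CloudWatch agent to Amazon CloudWatch and monitor your code for optimal GPU utilization. Since then, this feature has been integrated into many of our managed Amazon Machine Images (AMIs), such as the Deep Learning AMI and the AWS ParallelCluster AMI. To obtain instance-level metrics of GPU utilization, you can use Packer or Amazon ImageBuilder to bootstrap your own custom AMI and use it in various managed service offerings such as AWS Batch, Amazon Elastic Container Service (Amazon ECS), or Amazon Elastic Kubernetes Service (Amazon EKS). However, for many container-based service offerings and workloads, it's ideal to capture utilization metrics at the container, pod, or namespace level.

This post details how to set up container-based GPU metrics and provides an example of collecting these metrics from EKS pods.

Solution overview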

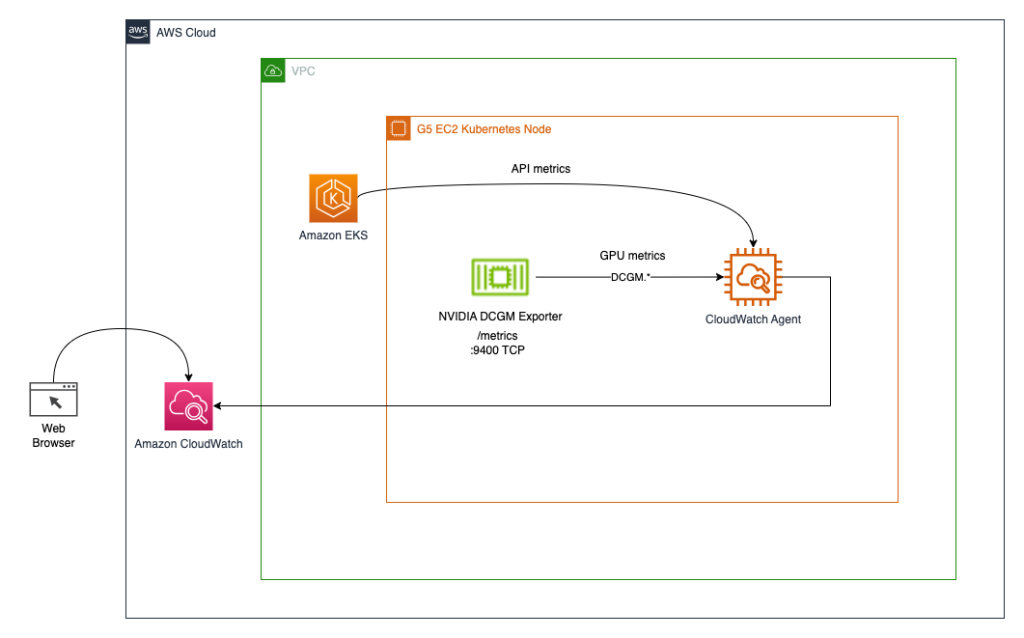

To demonstrate container-based GPU metrics, we create an EKS cluster with g5.2xlarge instances; however, this works with any supported NVIDIA accelerated instance family.

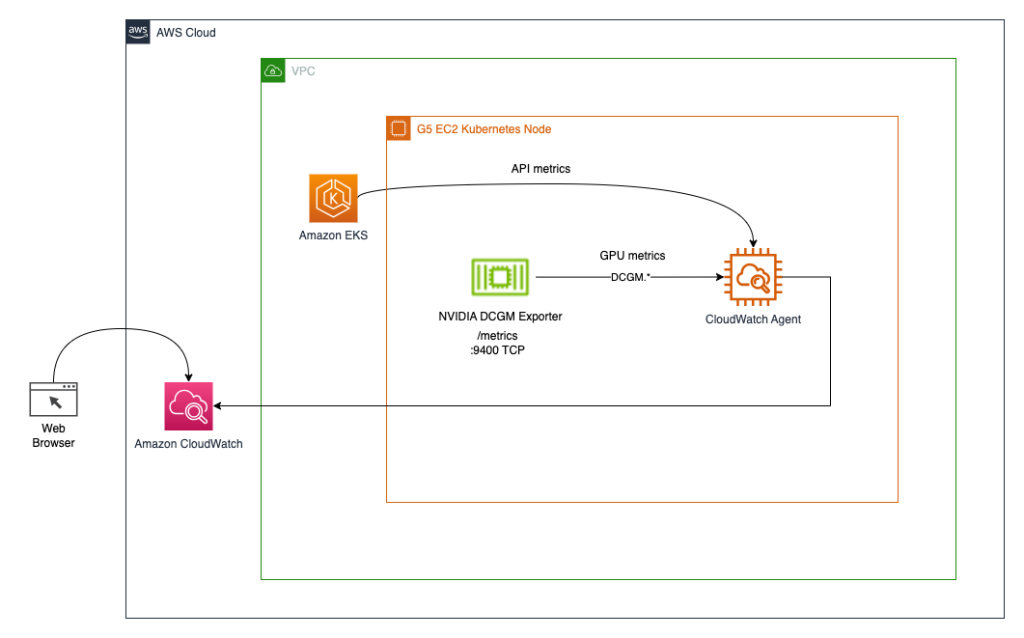

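We deploy the NVIDIA GPU Operator to enable the use of GPU resources and the NVIDIA DCGM Exporter to enable GPU metrics collection. Then we explore two architectures. The first one connects the metrics from the NVIDIA DCGM Exporter to CloudWatch via a CloudWatch agent, as shown in the following diagram.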

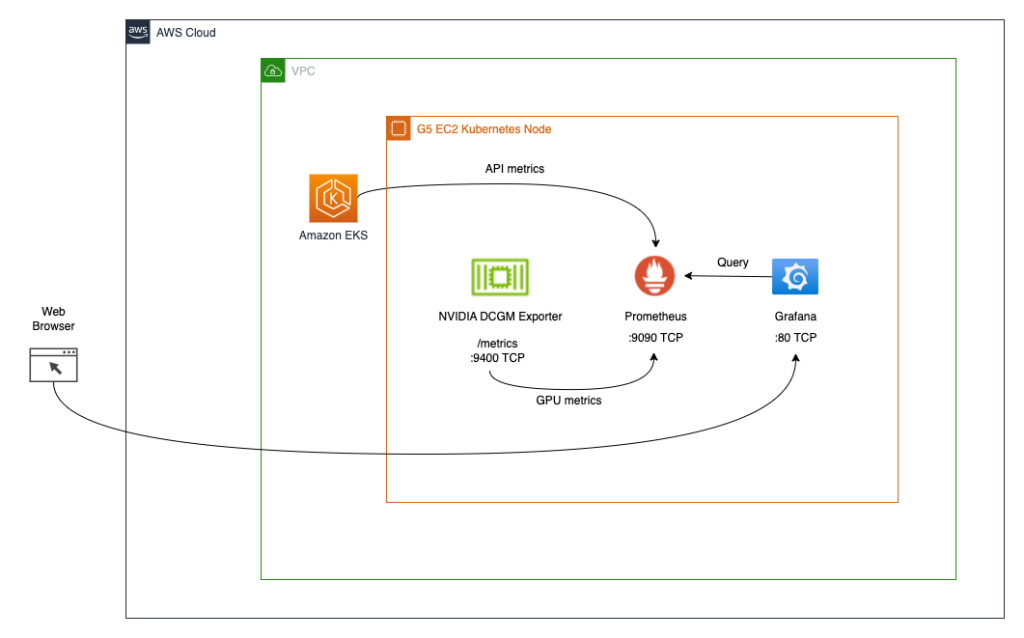

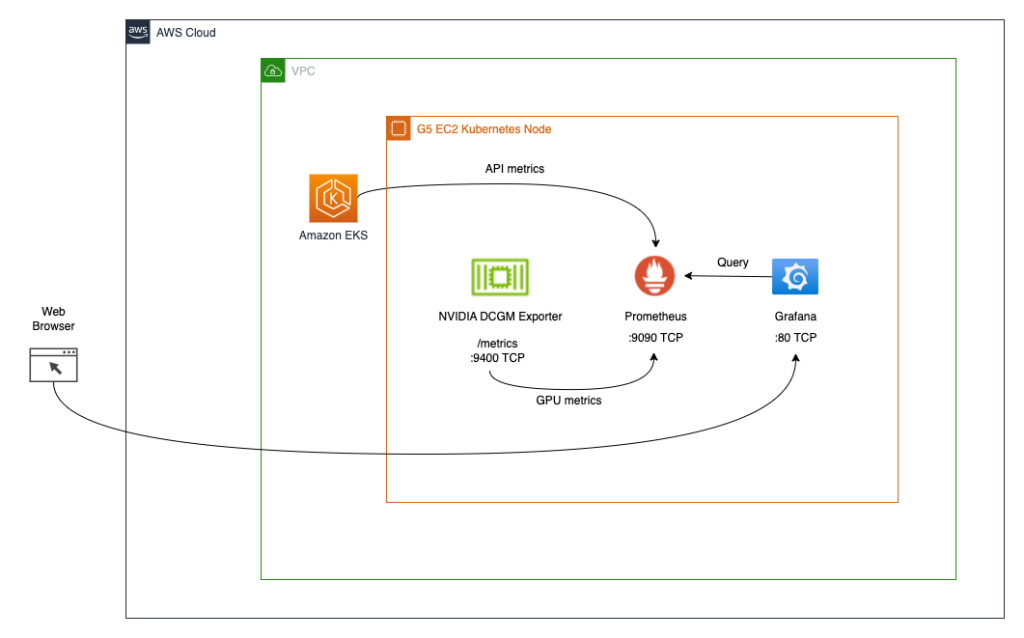

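The second architecture (see the following diagram) connects the metrics from the DCGM Exporter to Prometheus, and then we use a Grafana dashboard to visualize those metrics.

Prerequisites

To simplify reproducing the entire stack from this post, we use a container that has all the required tooling (aws cli, eksctl, helm, and so on) already installed. To clone the container project from GitHub, you need git. To build and run the container, you need Docker. To deploy the architecture, you need AWS credentials. To access Kubernetes services using port-forwarding, you also need kubectl.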

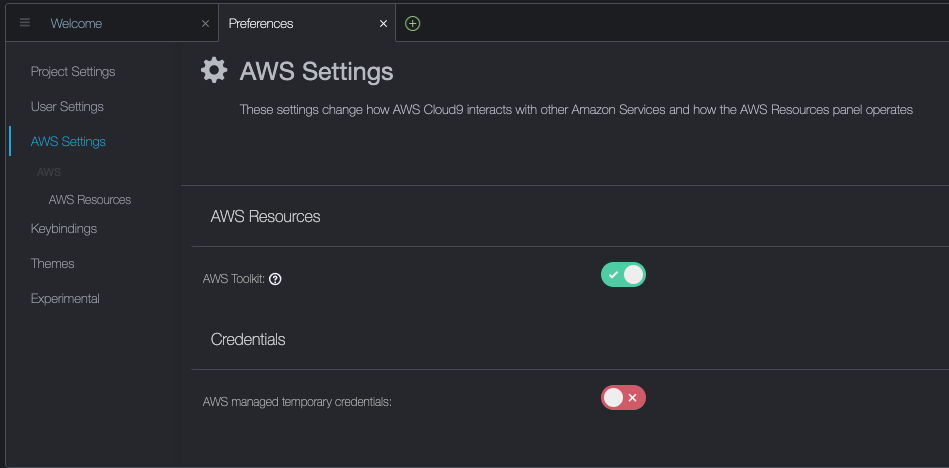

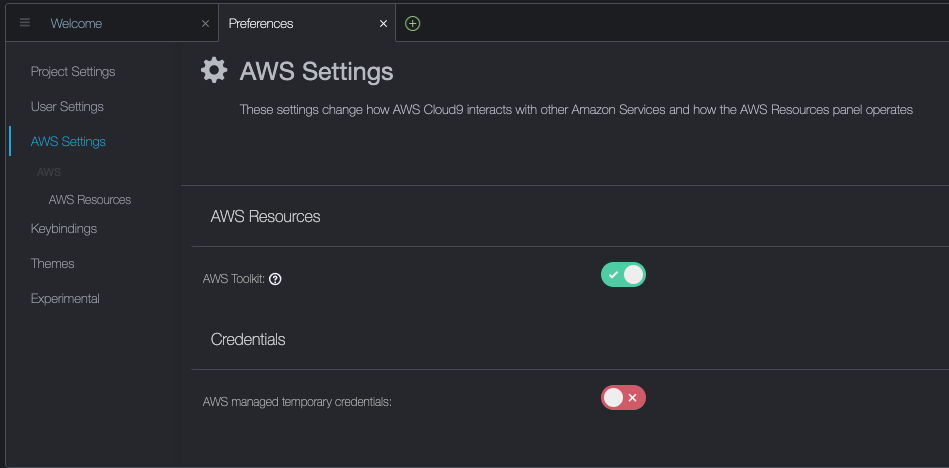

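These prerequisites can be installed on your local machine, an EC2 instance with NICE DCV, or AWS Cloud9. In this post, we use a c5.2xlarge Cloud9 instance with a 40 GB local storage volume. When using Cloud9, disable AWS managed temporary credentials by visiting Cloud9->Preferences->AWS Settings, as shown in the screenshot below.

Build and run the aws-do-eks container

Open a terminal shell in your preferred environment and run the following commands: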

git clone https://github.com/aws-samples/aws-do-eks

cd aws-do-eks

./build.sh

./run.sh

./exec.sh

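After these commands complete, you have a shell in a container environment that has all the tools needed to complete the tasks below. We refer to it as the “aws-do-eks shell”. Unless specifically instructed otherwise, run the commands in the following sections in this shell.

Create an EKS cluster with a node group

This group includes a GPU instance family of your choice; in this example, we use the g5.2xlarge instance type.

The aws-do-eks project comes with a collection of cluster configurations. You can set your desired cluster configuration with a single configuration change.

- In the container shell, run ./env-config.sh and then set CONF=conf/eksctl/yaml/eks-gpu-g5.yaml

- To verify the cluster configuration, run ./eks-config.sh

You should see the following cluster manifest: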

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: do-eks-yaml-g5
  version: "1.25"
  region: us-east-1

availabilityZones:
  - us-east-1a
  - us-east-1b
  - us-east-1c
  - us-east-1d

managedNodeGroups:
  - name: sys
    instanceType: m5.xlarge
    desiredCapacity: 1
    iam:
      withAddonPolicies:
        autoScaler: true
        cloudWatch: true
  - name: g5
    instanceType: g5.2xlarge
    instancePrefix: g5-2xl
    privateNetworking: true
    efaEnabled: false
    minSize: 0
    desiredCapacity: 1
    maxSize: 10
    volumeSize: 80
    iam:
      withAddonPolicies:
        cloudWatch: true

iam:
  withOIDC: true

- To create the cluster, run the following command in the container

./eks-create.sh

The output is as follows:

root@e5ecb162812f:/eks# ./eks-create.sh

/eks/impl/eksctl/yaml /eks

./eks-create.sh

Mon May 22 20:50:59 UTC 2023

Creating cluster using /eks/conf/eksctl/yaml/eks-gpu-g5.yaml ...

eksctl create cluster -f /eks/conf/eksctl/yaml/eks-gpu-g5.yaml

2023-05-22 20:50:59 [ℹ] eksctl version 0.133.0

2023-05-22 20:50:59 [ℹ] using region us-east-1

2023-05-22 20:50:59 [ℹ] subnets for us-east-1a - public:192.168.0.0/19 private:192.168.128.0/19

2023-05-22 20:50:59 [ℹ] subnets for us-east-1b - public:192.168.32.0/19 private:192.168.160.0/19

2023-05-22 20:50:59 [ℹ] subnets for us-east-1c - public:192.168.64.0/19 private:192.168.192.0/19

2023-05-22 20:50:59 [ℹ] subnets for us-east-1d - public:192.168.96.0/19 private:192.168.224.0/19

2023-05-22 20:50:59 [ℹ] nodegroup "sys" will use "" [AmazonLinux2/1.25]

2023-05-22 20:50:59 [ℹ] nodegroup "g5" will use "" [AmazonLinux2/1.25]

2023-05-22 20:50:59 [ℹ] using Kubernetes version 1.25

2023-05-22 20:50:59 [ℹ] creating EKS cluster "do-eks-yaml-g5" in "us-east-1" region with managed nodes

2023-05-22 20:50:59 [ℹ] 2 nodegroups (g5, sys) were included (based on the include/exclude rules)

2023-05-22 20:50:59 [ℹ] will create a CloudFormation stack for cluster itself and 0 nodegroup stack(s)

2023-05-22 20:50:59 [ℹ] will create a CloudFormation stack for cluster itself and 2 managed nodegroup stack(s)

2023-05-22 20:50:59 [ℹ] if you encounter any issues, check CloudFormation console or try 'eksctl utils describe-stacks --region=us-east-1 --cluster=do-eks-yaml-g5'

2023-05-22 20:50:59 [ℹ] Kubernetes API endpoint access will use default of {publicAccess=true, privateAccess=false} for cluster "do-eks-yaml-g5" in "us-east-1"

2023-05-22 20:50:59 [ℹ] CloudWatch logging will not be enabled for cluster "do-eks-yaml-g5" in "us-east-1"

2023-05-22 20:50:59 [ℹ] you can enable it with 'eksctl utils update-cluster-logging --enable-types={SPECIFY-YOUR-LOG-TYPES-HERE (e.g. all)} --region=us-east-1 --cluster=do-eks-yaml-g5'

2023-05-22 20:50:59 [ℹ] 2 sequential tasks: { create cluster control plane "do-eks-yaml-g5", 2 sequential sub-tasks: { 4 sequential sub-tasks: { wait for control plane to become ready, associate IAM OIDC provider, 2 sequential sub-tasks: { create IAM role for serviceaccount "kube-system/aws-node", create serviceaccount "kube-system/aws-node", }, restart daemonset "kube-system/aws-node", }, 2 parallel sub-tasks: { create managed nodegroup "sys", create managed nodegroup "g5", }, } }

2023-05-22 20:50:59 [ℹ] building cluster stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:51:00 [ℹ] deploying stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:51:30 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:52:00 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:53:01 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:54:01 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:55:01 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:56:02 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:57:02 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:58:02 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 20:59:02 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 21:00:03 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 21:01:03 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 21:02:03 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 21:03:04 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-cluster"

2023-05-22 21:05:07 [ℹ] building iamserviceaccount stack "eksctl-do-eks-yaml-g5-addon-iamserviceaccount-kube-system-aws-node"

2023-05-22 21:05:10 [ℹ] deploying stack "eksctl-do-eks-yaml-g5-addon-iamserviceaccount-kube-system-aws-node"

2023-05-22 21:05:10 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-addon-iamserviceaccount-kube-system-aws-node"

2023-05-22 21:05:40 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-addon-iamserviceaccount-kube-system-aws-node"

2023-05-22 21:05:40 [ℹ] serviceaccount "kube-system/aws-node" already exists

2023-05-22 21:05:41 [ℹ] updated serviceaccount "kube-system/aws-node"

2023-05-22 21:05:41 [ℹ] daemonset "kube-system/aws-node" restarted

2023-05-22 21:05:41 [ℹ] building managed nodegroup stack "eksctl-do-eks-yaml-g5-nodegroup-sys"

2023-05-22 21:05:41 [ℹ] building managed nodegroup stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:05:42 [ℹ] deploying stack "eksctl-do-eks-yaml-g5-nodegroup-sys"

2023-05-22 21:05:42 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-sys"

2023-05-22 21:05:42 [ℹ] deploying stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:05:42 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:06:12 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-sys"

2023-05-22 21:06:12 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:06:55 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-sys"

2023-05-22 21:07:11 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:08:29 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:08:45 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-sys"

2023-05-22 21:09:52 [ℹ] waiting for CloudFormation stack "eksctl-do-eks-yaml-g5-nodegroup-g5"

2023-05-22 21:09:53 [ℹ] waiting for the control plane to become ready

2023-05-22 21:09:53 [✔] saved kubeconfig as "/root/.kube/config"

2023-05-22 21:09:53 [ℹ] 1 task: { install Nvidia device plugin }

W0522 21:09:54.155837 1668 warnings.go:70] spec.template.metadata.annotations[scheduler.alpha.kubernetes.io/critical-pod]: non-functional in v1.16+; use the "priorityClassName" field instead

2023-05-22 21:09:54 [ℹ] created "kube-system:DaemonSet.apps/nvidia-device-plugin-daemonset"

2023-05-22 21:09:54 [ℹ] as you are using the EKS-Optimized Accelerated AMI with a GPU-enabled instance type, the Nvidia Kubernetes device plugin was automatically installed. to skip installing it, use --install-nvidia-plugin=false.

2023-05-22 21:09:54 [✔] all EKS cluster resources for "do-eks-yaml-g5" have been created

2023-05-22 21:09:54 [ℹ] nodegroup "sys" has 1 node(s)

2023-05-22 21:09:54 [ℹ] node "ip-192-168-18-137.ec2.internal" is ready

2023-05-22 21:09:54 [ℹ] waiting for at least 1 node(s) to become ready in "sys"

2023-05-22 21:09:54 [ℹ] nodegroup "sys" has 1 node(s)

2023-05-22 21:09:54 [ℹ] node "ip-192-168-18-137.ec2.internal" is ready

2023-05-22 21:09:55 [ℹ] kubectl command should work with "/root/.kube/config", try 'kubectl get nodes'

2023-05-22 21:09:55 [✔] EKS cluster "do-eks-yaml-g5" in "us-east-1" region is ready

Mon May 22 21:09:55 UTC 2023

Done creating cluster using /eks/conf/eksctl/yaml/eks-gpu-g5.yaml

/eks

- To verify that your cluster was created successfully, run the following command

kubectl get nodes -L node.kubernetes.io/instance-type

The output is similar to the following:

NAME STATUS ROLES AGE VERSION INSTANCE_TYPE

ip-192-168-18-137.ec2.internal Ready <none> 47m v1.25.9-eks-0a21954 m5.xlarge

ip-192-168-214-241.ec2.internal Ready <none> 46m v1.25.9-eks-0a21954 g5.2xlarge

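In this example, we have one m5.xlarge and one g5.2xlarge instance in our cluster; therefore, we see two nodes listed in the preceding output.

During cluster creation, the NVIDIA device plugin is installed. You need to remove it after cluster creation because we use the NVIDIA GPU Operator instead.

- Delete the plugin with the following command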

kubectl -n kube-system delete daemonset nvidia-device-plugin-daemonset

We get the following output:

daemonset.apps "nvidia-device-plugin-daemonset" deleted

Install the NVIDIA Helm repo

Install the NVIDIA Helm repo with the following command:

helm repo add nvidia https://helm.ngc.nvidia.com/nvidia && helm repo update

Deploy the DCGM exporter with the NVIDIA GPU Operator

To deploy the DCGM exporter, complete the following steps:

- Prepare the DCGM exporter GPU metrics configuration

curl https://raw.githubusercontent.com/NVIDIA/dcgm-exporter/main/etc/dcp-metrics-included.csv > dcgm-metrics.csv

You have the option to edit the dcgm-metrics.csv file. You can add or remove any metrics as needed.
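As a minimal sketch of such an edit, the following Python snippet keeps only the counters you name. It assumes the file follows the layout of the upstream dcp-metrics-included.csv (one `field, type, help text` entry per line, with `#` comment lines); the function name `filter_dcgm_metrics` and the sample content are illustrative and not part of the aws-do-eks project.

```python
def filter_dcgm_metrics(csv_text, keep):
    """Return csv_text with only the metric fields listed in `keep`.

    Comment lines (starting with '#') and blank lines are preserved so the
    file stays readable.
    """
    kept_lines = []
    for line in csv_text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            kept_lines.append(line)
            continue
        # The metric field name is the first comma-separated column.
        field = stripped.split(",", 1)[0].strip()
        if field in keep:
            kept_lines.append(line)
    return "\n".join(kept_lines) + "\n"


# Illustrative sample in the assumed layout, not the full upstream file.
SAMPLE = """# Utilization
DCGM_FI_DEV_GPU_UTIL, gauge, GPU utilization (in %).
DCGM_FI_DEV_MEM_COPY_UTIL, gauge, Memory utilization (in %).
DCGM_FI_DEV_ENC_UTIL, gauge, Encoder utilization (in %).
"""

if __name__ == "__main__":
    print(filter_dcgm_metrics(SAMPLE, {"DCGM_FI_DEV_GPU_UTIL"}), end="")
```

To apply it to the downloaded file, read dcgm-metrics.csv, pass its content through the function, and write the result back before creating the ConfigMap.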

- Create the gpu-operator namespace and the DCGM exporter ConfigMap

kubectl create namespace gpu-operator && \
kubectl create configmap metrics-config -n gpu-operator --from-file=dcgm-metrics.csv

The output is as follows:

namespace/gpu-operator created

configmap/metrics-config created

- Apply the GPU operator to the EKS cluster

helm install --wait --generate-name -n gpu-operator --create-namespace nvidia/gpu-operator --set dcgmExporter.config.name=metrics-config --set dcgmExporter.env[0].name=DCGM_EXPORTER_COLLECTORS --set dcgmExporter.env[0].value=/etc/dcgm-exporter/dcgm-metrics.csv --set toolkit.enabled=false

The output is as follows:

NAME: gpu-operator-1684795140

LAST DEPLOYED: Day Month Date HH:mm:ss YYYY

NAMESPACE: gpu-operator

STATUS: deployed

REVISION: 1

TEST SUITE: None

- Confirm that the DCGM exporter pod is running

kubectl -n gpu-operator get pods | grep dcgm

The output is as follows:

nvidia-dcgm-exporter-lkmfr 1/1 Running 0 1m

If you inspect the logs, you should see the “Starting webserver” message:

kubectl -n gpu-operator logs -f $(kubectl -n gpu-operator get pods | grep dcgm | cut -d ' ' -f 1)

The output is as follows:

Defaulted container "nvidia-dcgm-exporter" out of: nvidia-dcgm-exporter, toolkit-validation (init)

time="2023-05-22T22:40:08Z" level=info msg="Starting dcgm-exporter"

time="2023-05-22T22:40:08Z" level=info msg="DCGM successfully initialized!"

time="2023-05-22T22:40:08Z" level=info msg="Collecting DCP Metrics"

time="2023-05-22T22:40:08Z" level=info msg="No configmap data specified, falling back to metric file /etc/dcgm-exporter/dcgm-metrics.csv"

time="2023-05-22T22:40:08Z" level=info msg="Initializing system entities of type: GPU"

time="2023-05-22T22:40:09Z" level=info msg="Initializing system entities of type: NvSwitch"

time="2023-05-22T22:40:09Z" level=info msg="Not collecting switch metrics: no switches to monitor"

time="2023-05-22T22:40:09Z" level=info msg="Initializing system entities of type: NvLink"

time="2023-05-22T22:40:09Z" level=info msg="Not collecting link metrics: no switches to monitor"

time="2023-05-22T22:40:09Z" level=info msg="Kubernetes metrics collection enabled!"

time="2023-05-22T22:40:09Z" level=info msg="Pipeline starting"

time="2023-05-22T22:40:09Z" level=info msg="Starting webserver"

The NVIDIA DCGM Exporter exposes a Prometheus metrics endpoint, which can be ingested by the CloudWatch agent. To see the endpoint, use the following command:

kubectl -n gpu-operator get services | grep dcgm

We get the following output:

nvidia-dcgm-exporter ClusterIP 10.100.183.207 <none> 9400/TCP 10m

- To generate some GPU utilization, we deploy a pod that runs the gpu-burn binary

kubectl apply -f https://raw.githubusercontent.com/aws-samples/aws-do-eks/main/Container-Root/eks/deployment/gpu-metrics/gpu-burn-deployment.yaml

The output is as follows:

deployment.apps/gpu-burn created

This deployment uses a single GPU to produce a continuous pattern of 100% utilization for 20 seconds followed by 0% utilization for 20 seconds.
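To make the expected shape of the graphs concrete, here is a small Python model of that on/off pattern. It is an idealized sketch, assuming exact 20-second phases and one sample per second; real measurements will have ramp-up, jitter, and scrape delays, and both function names are hypothetical.

```python
def expected_gpu_util(t_seconds, on_seconds=20, off_seconds=20):
    """Idealized utilization (in %) of the gpu-burn pattern at time t."""
    period = on_seconds + off_seconds
    return 100 if (t_seconds % period) < on_seconds else 0


def average_util(start, window):
    """Mean of the idealized pattern over `window` one-second samples."""
    samples = [expected_gpu_util(start + i) for i in range(window)]
    return sum(samples) / window


if __name__ == "__main__":
    # A short window falls inside one phase; a 40-second window spans both.
    print(average_util(0, 5), average_util(0, 40))
```

In this model, a short aggregation window preserves the square wave, whereas averaging over a full 40-second cycle flattens it to 50%.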

- To make sure the endpoint works, you can run a temporary container that uses curl to read the content of http://nvidia-dcgm-exporter:9400/metrics

kubectl -n gpu-operator run -it --rm curl --restart='Never' --image=curlimages/curl --command -- curl http://nvidia-dcgm-exporter:9400/metrics

We get the following output:

# HELP DCGM_FI_DEV_SM_CLOCK SM clock frequency (in MHz).

# TYPE DCGM_FI_DEV_SM_CLOCK gauge

DCGM_FI_DEV_SM_CLOCK{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 1455

# HELP DCGM_FI_DEV_MEM_CLOCK Memory clock frequency (in MHz).

# TYPE DCGM_FI_DEV_MEM_CLOCK gauge

DCGM_FI_DEV_MEM_CLOCK{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 6250

# HELP DCGM_FI_DEV_GPU_TEMP GPU temperature (in C).

# TYPE DCGM_FI_DEV_GPU_TEMP gauge

DCGM_FI_DEV_GPU_TEMP{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 65

# HELP DCGM_FI_DEV_POWER_USAGE Power draw (in W).

# TYPE DCGM_FI_DEV_POWER_USAGE gauge

DCGM_FI_DEV_POWER_USAGE{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 299.437000

# HELP DCGM_FI_DEV_TOTAL_ENERGY_CONSUMPTION Total energy consumption since boot (in mJ).

# TYPE DCGM_FI_DEV_TOTAL_ENERGY_CONSUMPTION counter

DCGM_FI_DEV_TOTAL_ENERGY_CONSUMPTION{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 15782796862

# HELP DCGM_FI_DEV_PCIE_REPLAY_COUNTER Total number of PCIe retries.

# TYPE DCGM_FI_DEV_PCIE_REPLAY_COUNTER counter

DCGM_FI_DEV_PCIE_REPLAY_COUNTER{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_GPU_UTIL GPU utilization (in %).

# TYPE DCGM_FI_DEV_GPU_UTIL gauge

DCGM_FI_DEV_GPU_UTIL{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 100

# HELP DCGM_FI_DEV_MEM_COPY_UTIL Memory utilization (in %).

# TYPE DCGM_FI_DEV_MEM_COPY_UTIL gauge

DCGM_FI_DEV_MEM_COPY_UTIL{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 38

# HELP DCGM_FI_DEV_ENC_UTIL Encoder utilization (in %).

# TYPE DCGM_FI_DEV_ENC_UTIL gauge

DCGM_FI_DEV_ENC_UTIL{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_DEC_UTIL Decoder utilization (in %).

# TYPE DCGM_FI_DEV_DEC_UTIL gauge

DCGM_FI_DEV_DEC_UTIL{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_XID_ERRORS Value of the last XID error encountered.

# TYPE DCGM_FI_DEV_XID_ERRORS gauge

DCGM_FI_DEV_XID_ERRORS{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_FB_FREE Framebuffer memory free (in MiB).

# TYPE DCGM_FI_DEV_FB_FREE gauge

DCGM_FI_DEV_FB_FREE{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 2230

# HELP DCGM_FI_DEV_FB_USED Framebuffer memory used (in MiB).

# TYPE DCGM_FI_DEV_FB_USED gauge

DCGM_FI_DEV_FB_USED{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 20501

# HELP DCGM_FI_DEV_NVLINK_BANDWIDTH_TOTAL Total number of NVLink bandwidth counters for all lanes.

# TYPE DCGM_FI_DEV_NVLINK_BANDWIDTH_TOTAL counter

DCGM_FI_DEV_NVLINK_BANDWIDTH_TOTAL{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_VGPU_LICENSE_STATUS vGPU License status

# TYPE DCGM_FI_DEV_VGPU_LICENSE_STATUS gauge

DCGM_FI_DEV_VGPU_LICENSE_STATUS{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_UNCORRECTABLE_REMAPPED_ROWS Number of remapped rows for uncorrectable errors

# TYPE DCGM_FI_DEV_UNCORRECTABLE_REMAPPED_ROWS counter

DCGM_FI_DEV_UNCORRECTABLE_REMAPPED_ROWS{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_CORRECTABLE_REMAPPED_ROWS Number of remapped rows for correctable errors

# TYPE DCGM_FI_DEV_CORRECTABLE_REMAPPED_ROWS counter

DCGM_FI_DEV_CORRECTABLE_REMAPPED_ROWS{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_DEV_ROW_REMAP_FAILURE Whether remapping of rows has failed

# TYPE DCGM_FI_DEV_ROW_REMAP_FAILURE gauge

DCGM_FI_DEV_ROW_REMAP_FAILURE{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0

# HELP DCGM_FI_PROF_GR_ENGINE_ACTIVE Ratio of time the graphics engine is active (in %).

# TYPE DCGM_FI_PROF_GR_ENGINE_ACTIVE gauge

DCGM_FI_PROF_GR_ENGINE_ACTIVE{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0.808369

# HELP DCGM_FI_PROF_PIPE_TENSOR_ACTIVE Ratio of cycles the tensor (HMMA) pipe is active (in %).

# TYPE DCGM_FI_PROF_PIPE_TENSOR_ACTIVE gauge

DCGM_FI_PROF_PIPE_TENSOR_ACTIVE{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0.000000

# HELP DCGM_FI_PROF_DRAM_ACTIVE Ratio of cycles the device memory interface is active sending or receiving data (in %).

# TYPE DCGM_FI_PROF_DRAM_ACTIVE gauge

DCGM_FI_PROF_DRAM_ACTIVE{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 0.315787

# HELP DCGM_FI_PROF_PCIE_TX_BYTES The rate of data transmitted over the PCIe bus - including both protocol headers and data payloads - in bytes per second.

# TYPE DCGM_FI_PROF_PCIE_TX_BYTES gauge

DCGM_FI_PROF_PCIE_TX_BYTES{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 3985328

# HELP DCGM_FI_PROF_PCIE_RX_BYTES The rate of data received over the PCIe bus - including both protocol headers and data payloads - in bytes per second.

# TYPE DCGM_FI_PROF_PCIE_RX_BYTES gauge

DCGM_FI_PROF_PCIE_RX_BYTES{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 21715174

pod "curl" deleted
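The output above is in the Prometheus text exposition format, and each sample carries `namespace` and `pod` labels. The following Python sketch shows one way to extract per-pod values from such text. It is a simplified parser that assumes labeled samples without trailing timestamps; the function names are hypothetical and the sample is copied from the output above.

```python
import re

# metric_name{label="value",...} sample_value
SAMPLE_LINE = re.compile(
    r'^(?P<name>[A-Za-z_:][A-Za-z0-9_:]*)\{(?P<labels>.*)\}\s+(?P<value>\S+)$'
)
LABEL = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_samples(text):
    """Yield (metric_name, labels_dict, value) for each labeled sample line."""
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip HELP/TYPE comments and blank lines
        match = SAMPLE_LINE.match(line)
        if match is None:
            continue  # e.g. the trailing 'pod "curl" deleted' message
        labels = dict(LABEL.findall(match.group("labels")))
        yield match.group("name"), labels, float(match.group("value"))


def gpu_util_by_pod(text, metric="DCGM_FI_DEV_GPU_UTIL"):
    """Map (namespace, pod) to the reported value of `metric`."""
    result = {}
    for name, labels, value in parse_samples(text):
        if name == metric and "pod" in labels:
            result[(labels.get("namespace", ""), labels["pod"])] = value
    return result


SAMPLE = '''# HELP DCGM_FI_DEV_GPU_UTIL GPU utilization (in %).
# TYPE DCGM_FI_DEV_GPU_UTIL gauge
DCGM_FI_DEV_GPU_UTIL{gpu="0",UUID="GPU-ff76466b-22fc-f7a9-abe2-ce3ac453b8b3",device="nvidia0",modelName="NVIDIA A10G",Hostname="nvidia-dcgm-exporter-48cwd",DCGM_FI_DRIVER_VERSION="470.182.03",container="main",namespace="kube-system",pod="gpu-burn-c68d8c774-ltg9s"} 100
pod "curl" deleted
'''

if __name__ == "__main__":
    print(gpu_util_by_pod(SAMPLE))
```

For anything beyond a quick check, a maintained Prometheus client library is likely a better choice than a hand-written parser.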

Configure and deploy the CloudWatch agent

To configure and deploy the CloudWatch agent, complete the following steps:

- Download the YAML file and edit it

curl -O https://raw.githubusercontent.com/aws-samples/amazon-cloudwatch-container-insights/k8s/1.3.15/k8s-deployment-manifest-templates/deployment-mode/service/cwagent-prometheus/prometheus-eks.yaml

This file contains a cwagent configmap and a prometheus configmap. For this post, we edit both.

- Edit the prometheus-eks.yaml file

Open the prometheus-eks.yaml file in your favorite editor and replace the cwagentconfig.json section with the following content:

apiVersion: v1
data:
  # cwagent json config
  cwagentconfig.json: |
    {
      "logs": {
        "metrics_collected": {
          "prometheus": {
            "prometheus_config_path": "/etc/prometheusconfig/prometheus.yaml",
            "emf_processor": {
              "metric_declaration": [
                {
                  "source_labels": ["Service"],
                  "label_matcher": ".*dcgm.*",
                  "dimensions": [["Service","Namespace","ClusterName","job","pod"]],
                  "metric_selectors": [
                    "^DCGM_FI_DEV_GPU_UTIL$",
                    "^DCGM_FI_DEV_DEC_UTIL$",
                    "^DCGM_FI_DEV_ENC_UTIL$",
                    "^DCGM_FI_DEV_MEM_CLOCK$",
                    "^DCGM_FI_DEV_MEM_COPY_UTIL$",
                    "^DCGM_FI_DEV_POWER_USAGE$",
                    "^DCGM_FI_DEV_ROW_REMAP_FAILURE$",
                    "^DCGM_FI_DEV_SM_CLOCK$",
                    "^DCGM_FI_DEV_XID_ERRORS$",
                    "^DCGM_FI_PROF_DRAM_ACTIVE$",
                    "^DCGM_FI_PROF_GR_ENGINE_ACTIVE$",
                    "^DCGM_FI_PROF_PCIE_RX_BYTES$",
                    "^DCGM_FI_PROF_PCIE_TX_BYTES$",
                    "^DCGM_FI_PROF_PIPE_TENSOR_ACTIVE$"
                  ]
                }
              ]
            }
          }
        },
        "force_flush_interval": 5
      }
    }
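If you change the set of exported metrics often, the JSON body of the cwagentconfig.json section can be generated rather than edited by hand. The following Python sketch builds the same structure from a list of metric names. The keys and fixed values mirror the section above; the helper name `build_cwagent_config` is hypothetical, and anchoring every selector with `^` and `$` is an assumption carried over from the selectors shown in this post.

```python
import json


def build_cwagent_config(metric_names, dimensions, label_matcher=".*dcgm.*"):
    """Build the cwagentconfig.json document for the given DCGM metric names."""
    declaration = {
        "source_labels": ["Service"],
        "label_matcher": label_matcher,
        "dimensions": [list(dimensions)],
        # Anchor each name so that only exact metric names are selected.
        "metric_selectors": [f"^{name}$" for name in metric_names],
    }
    return {
        "logs": {
            "metrics_collected": {
                "prometheus": {
                    "prometheus_config_path": "/etc/prometheusconfig/prometheus.yaml",
                    "emf_processor": {"metric_declaration": [declaration]},
                }
            },
            "force_flush_interval": 5,
        }
    }


if __name__ == "__main__":
    config = build_cwagent_config(
        ["DCGM_FI_DEV_GPU_UTIL", "DCGM_FI_DEV_POWER_USAGE"],
        ["Service", "Namespace", "ClusterName", "job", "pod"],
    )
    print(json.dumps(config, indent=2))
```

Serializing with `json.dumps` also catches structural mistakes, such as a missing brace, before the manifest is applied.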

- In the prometheus config section, append the following job definition for the DCGM exporter

- job_name: 'kubernetes-pod-dcgm-exporter'
  sample_limit: 10000
  metrics_path: /api/v1/metrics/prometheus
  kubernetes_sd_configs:
  - role: pod
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_container_name]
    action: keep
    regex: '^DCGM.*$'
  - source_labels: [__address__]
    action: replace
    regex: ([^:]+)(?::\d+)?
    replacement: ${1}:9400
    target_label: __address__
  - action: labelmap
    regex: __meta_kubernetes_pod_label_(.+)
  - action: replace
    source_labels:
    - __meta_kubernetes_namespace
    target_label: Namespace
  - source_labels: [__meta_kubernetes_pod]
    action: replace
    target_label: pod
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_container_name
    target_label: container_name
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_controller_name
    target_label: pod_controller_name
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_controller_kind
    target_label: pod_controller_kind
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_phase
    target_label: pod_phase
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_node_name
    target_label: NodeName
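The rule that rewrites `__address__` is the least obvious part of this job: it captures the host part of the discovered address, drops an optional numeric port (the `(?::\d+)?` group), and appends port 9400, where the DCGM exporter listens. The Python sketch below imitates that single rule so you can try it on sample addresses. It is an approximation of the relabeling behavior rather than Prometheus code, and `rewrite_address` is a hypothetical name.

```python
import re

# Same pattern as in the relabel rule, including the \d escape.
ADDRESS_PATTERN = re.compile(r"([^:]+)(?::\d+)?")


def rewrite_address(address, port=9400):
    """Mimic the 'replace' relabel action on the __address__ label."""
    # Prometheus anchors relabel regexes at both ends, hence fullmatch.
    match = ADDRESS_PATTERN.fullmatch(address)
    if match is None:
        return address  # no match: the label is left unchanged
    return f"{match.group(1)}:{port}"


if __name__ == "__main__":
    for addr in ("192.168.214.10:8080", "192.168.214.10"):
        print(addr, "->", rewrite_address(addr))
```

Note that the backslash in `\d` matters: written as `d+`, the group would only match a literal letter d, so an address that already carries a port would not match and would keep its original port.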

- Save the file and apply the cwagent-dcgm configuration to your cluster

kubectl apply -f ./prometheus-eks.yaml

We get the following output:

namespace/amazon-cloudwatch created

configmap/prometheus-cwagentconfig created

configmap/prometheus-config created

serviceaccount/cwagent-prometheus created

clusterrole.rbac.authorization.k8s.io/cwagent-prometheus-role created

clusterrolebinding.rbac.authorization.k8s.io/cwagent-prometheus-role-binding created

deployment.apps/cwagent-prometheus created

- Confirm that the CloudWatch agent pod is running

kubectl -n amazon-cloudwatch get pods

We get the following output:

NAME READY STATUS RESTARTS AGE

cwagent-prometheus-7dfd69cc46-s4cx7 1/1 Running 0 15m

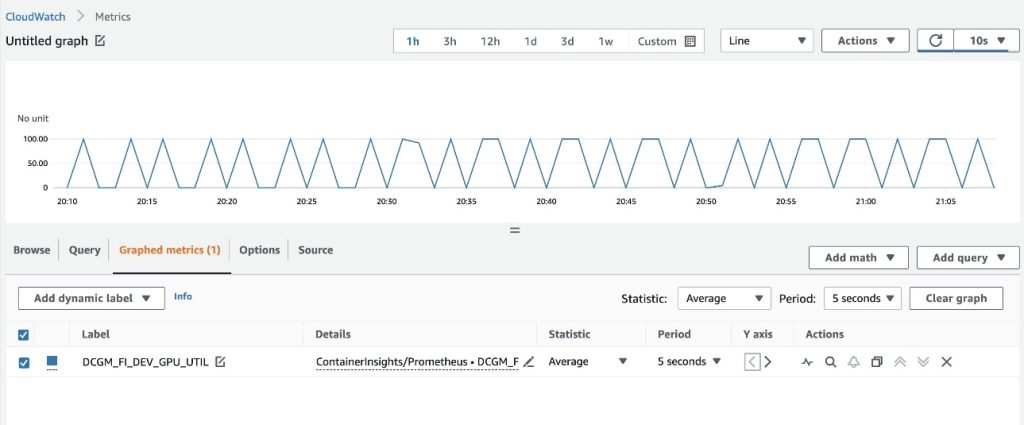

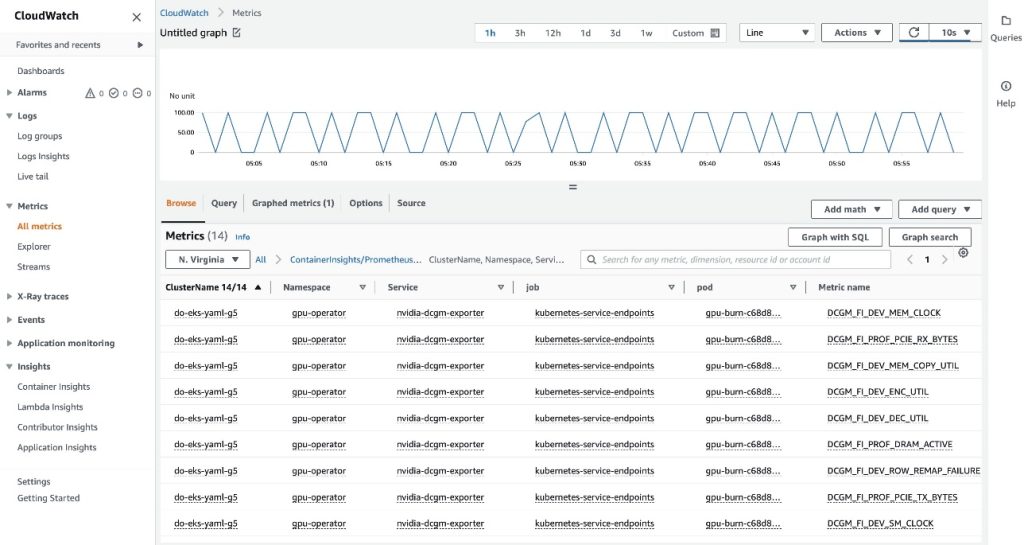

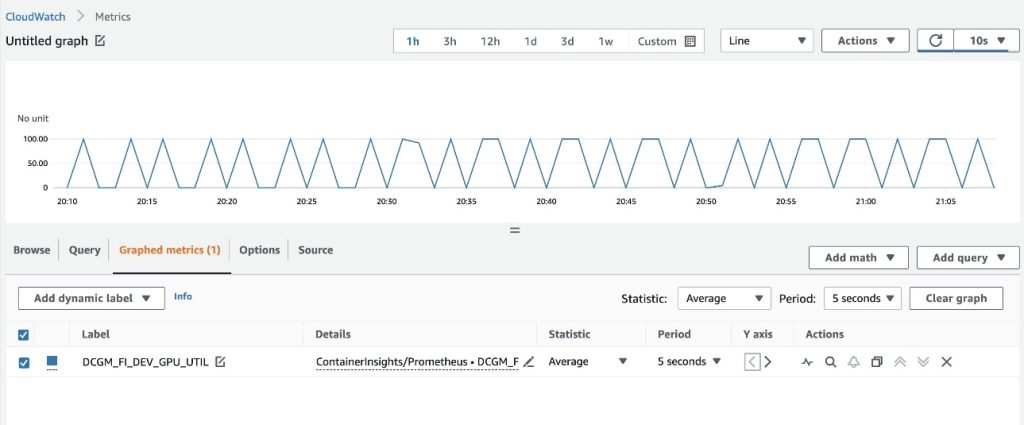

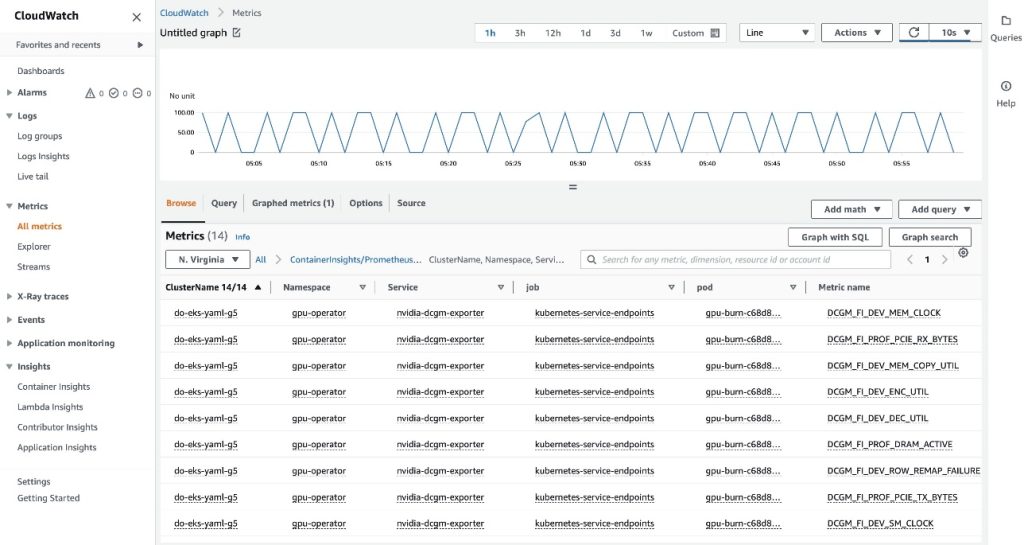

Visualize metrics on the CloudWatch console

To visualize the metrics in CloudWatch, complete the following steps:

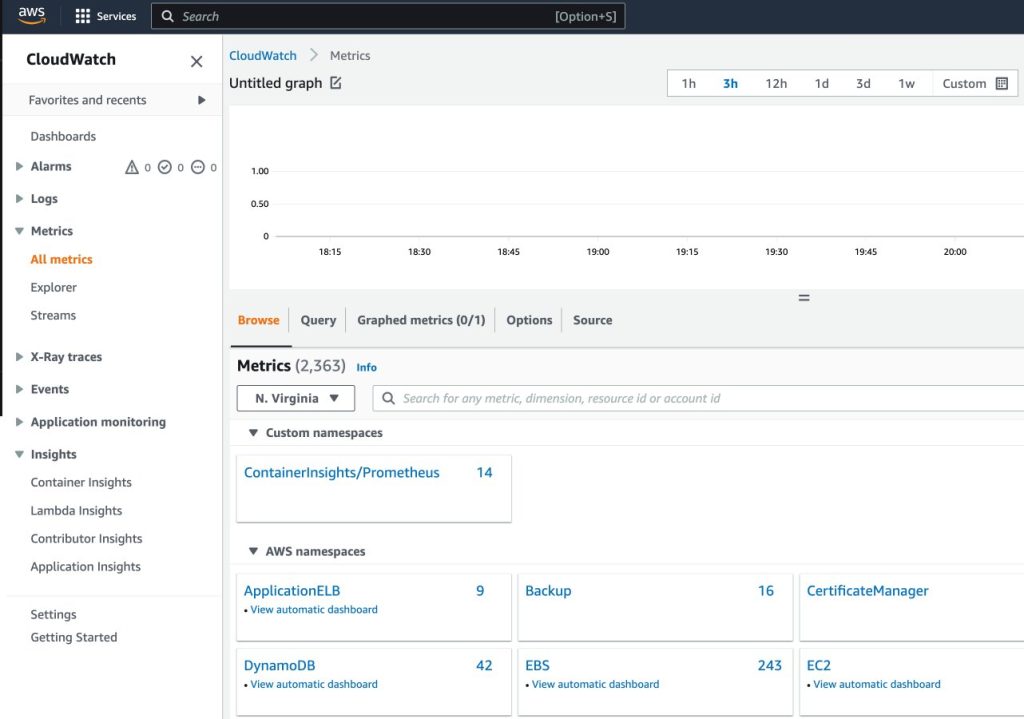

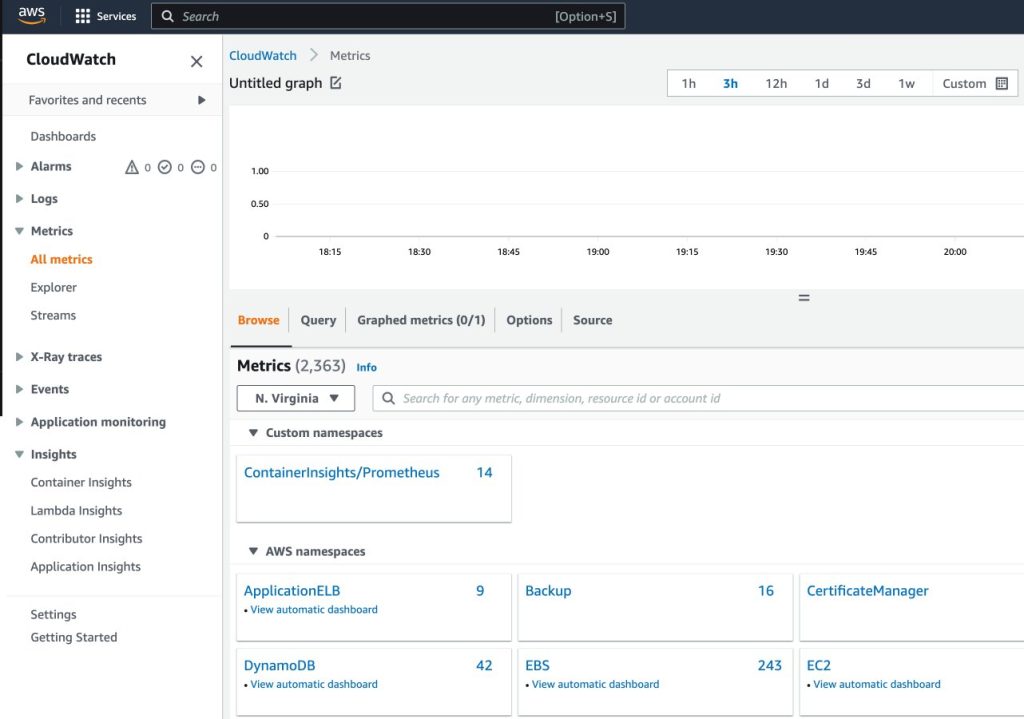

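- On the CloudWatch console, under Metrics in the navigation pane, choose All metrics

- In the Custom namespaces section, choose the new entry for ContainerInsights/Prometheus

For more information about the ContainerInsights/Prometheus namespace, refer to Scraping additional Prometheus sources and importing those metrics.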

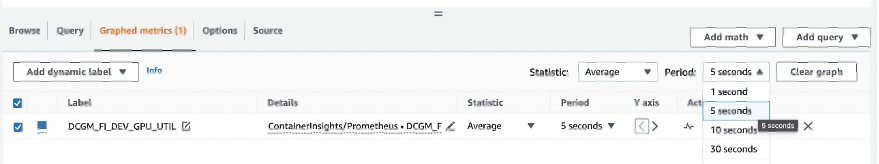

- Drill down to the metric names and choose DCGM_FI_DEV_GPU_UTIL

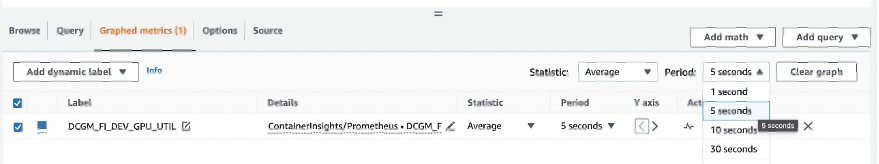

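- On the Graphed metrics tab, set Period to 5 seconds

- Set the refresh interval to 10 seconds

You will see the metrics collected from the DCGM exporter, which visualize the gpu-burn pattern switching on and off every 20 seconds.

On the Browse tab, you can see the data, including the pod name for each metric.

The EKS API metadata has been combined with the DCGM metrics data, resulting in the provided pod-based GPU metrics.

This concludes the first approach of exporting DCGM metrics to CloudWatch via the CloudWatch agent.

In the next section, we configure the second architecture, which exports the DCGM metrics to Prometheus, and we visualize them with Grafana.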

Use Prometheus and Grafana to visualize GPU metrics from DCGM

Complete the following steps:

- Add the Prometheus community helm chart

helm repo add prometheus-community https://prometheus-community.github.io/helm-charts

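This chart deploys both Prometheus and Grafana. We need to make some edits to the chart before running the install command.

- Save the chart configuration values to a file in /tmp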

helm inspect values prometheus-community/kube-prometheus-stack > /tmp/kube-prometheus-stack.values

- Edit the chart configuration file

Edit the saved file (/tmp/kube-prometheus-stack.values) and set the following option by looking for the setting name and setting its value:

prometheus.prometheusSpec.serviceMonitorSelectorNilUsesHelmValues=false

- Add the following ConfigMap to the additionalScrapeConfigs section

additionalScrapeConfigs:
- job_name: gpu-metrics
  scrape_interval: 1s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - role: endpoints
    namespaces:
      names:
      - gpu-operator
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_node_name]
    action: replace
    target_label: kubernetes_node

- Deploy the Prometheus stack with the updated values

helm install prometheus-community/kube-prometheus-stack \
--create-namespace --namespace prometheus \
--generate-name \
--values /tmp/kube-prometheus-stack.values

We get the following output:

NAME: kube-prometheus-stack-1684965548

LAST DEPLOYED: Wed May 24 21:59:14 2023

NAMESPACE: prometheus

STATUS: deployed

REVISION: 1

NOTES:

kube-prometheus-stack has been installed. Check its status by running:

kubectl --namespace prometheus get pods -l "release=kube-prometheus-stack-1684965548"

Visit https://github.com/prometheus-operator/kube-prometheus for instructions on how to create & configure Alertmanager and Prometheus instances using the Operator.

- Confirm that the Prometheus pods are running

kubectl get pods -n prometheus

We get the following output:

NAME READY STATUS RESTARTS AGE

alertmanager-kube-prometheus-stack-1684-alertmanager-0 2/2 Running 0 6m55s

kube-prometheus-stack-1684-operator-6c87649878-j7v55 1/1 Running 0 6m58s

kube-prometheus-stack-1684965548-grafana-dcd7b4c96-bzm8p 3/3 Running 0 6m58s

kube-prometheus-stack-1684965548-kube-state-metrics-7d856dptlj5 1/1 Running 0 6m58s

kube-prometheus-stack-1684965548-prometheus-node-exporter-2fbl5 1/1 Running 0 6m58s

kube-prometheus-stack-1684965548-prometheus-node-exporter-m7zmv 1/1 Running 0 6m58s

prometheus-kube-prometheus-stack-1684-prometheus-0 2/2 Running 0 6m55s

The Prometheus and Grafana pods are in the Running state.

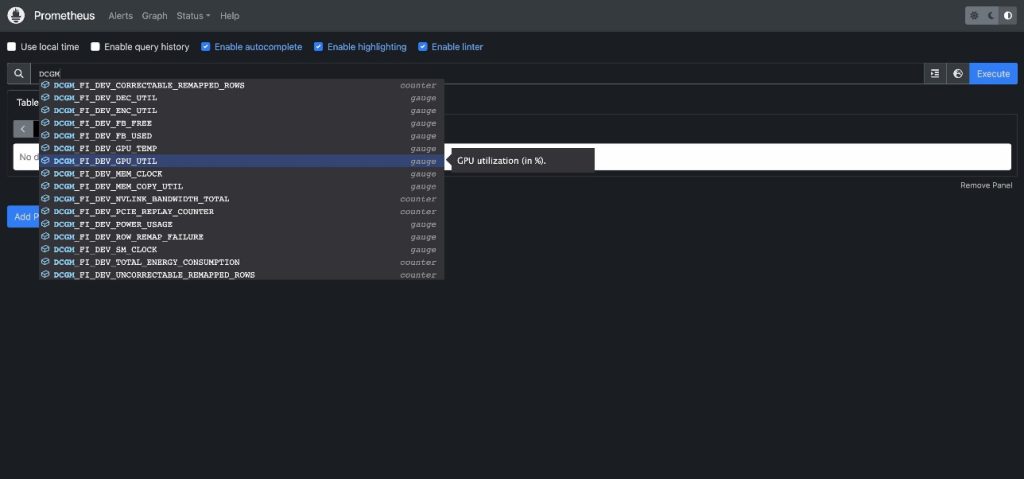

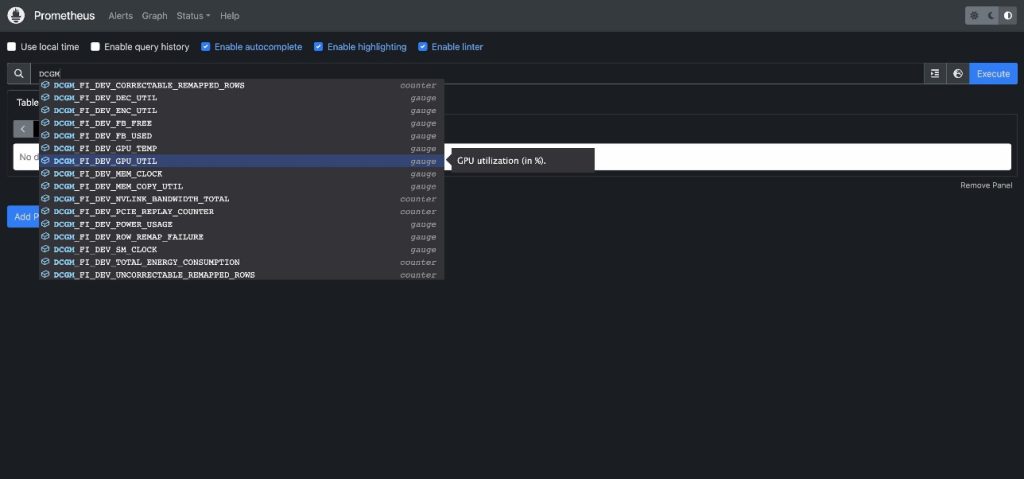

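Next, we validate that the DCGM metrics are flowing into Prometheus.

- Port-forward the Prometheus UI

There are different ways to expose the Prometheus UI running in EKS to requests originating outside of the cluster. We use kubectl port-forwarding. So far, we have been running commands inside the aws-do-eks container. To access the Prometheus service running in the cluster, we create a tunnel from the host where the aws-do-eks container is running, by running the following command outside of the container, in a new terminal shell on the host. We refer to this as the “host shell”.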

kubectl -n prometheus port-forward svc/$(kubectl -n prometheus get svc | grep prometheus | grep -v alertmanager | grep -v operator | grep -v grafana | grep -v metrics | grep -v exporter | grep -v operated | cut -d ' ' -f 1) 8080:9090 &

While the port-forwarding process is running, we can access the Prometheus UI from the host as described below.
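The port-forward command relies on a chain of `grep` filters to pick the Prometheus server service out of the `kubectl get svc` listing. The Python sketch below restates that selection logic so it is easier to reason about. The service listing in `SAMPLE` is made up for illustration (the names only follow the pattern of the pod names shown earlier), and `pick_prometheus_service` is a hypothetical helper.

```python
EXCLUDED = ("alertmanager", "operator", "grafana", "metrics", "exporter", "operated")


def pick_prometheus_service(kubectl_output):
    """Return the first service name that passes the same filters as the grep chain."""
    for line in kubectl_output.splitlines():
        if "prometheus" not in line:
            continue  # also drops the header row
        if any(word in line for word in EXCLUDED):
            continue
        return line.split()[0]
    return None


# Hypothetical listing; real names depend on the generated release name.
SAMPLE = """NAME                                        TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)    AGE
alertmanager-operated                       ClusterIP   None          <none>        9093/TCP   7m
kube-prometheus-stack-1684-alertmanager     ClusterIP   10.100.0.11   <none>        9093/TCP   7m
kube-prometheus-stack-1684-operator         ClusterIP   10.100.0.12   <none>        443/TCP    7m
kube-prometheus-stack-1684-prometheus       ClusterIP   10.100.0.13   <none>        9090/TCP   7m
kube-prometheus-stack-1684965548-grafana    ClusterIP   10.100.0.14   <none>        80/TCP     7m
prometheus-operated                         ClusterIP   None          <none>        9090/TCP   7m
"""

if __name__ == "__main__":
    print(pick_prometheus_service(SAMPLE))
```

One difference from the shell pipeline: the sketch stops at the first match, whereas the `grep` chain passes every matching line on to `cut`, so the command only works as intended when exactly one service survives the filters.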

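- Open the Prometheus UI

- If you are using Cloud9, navigate to Preview->Preview Running Application to open the Prometheus UI in a tab inside the Cloud9 IDE, then choose the icon in the top-right corner of the tab to pop out in a new window.

- If you are on your local host or connected to an EC2 instance via remote desktop, open a browser and visit the URL http://localhost:8080.

- Enter DCGM to see the DCGM metrics that are flowing into Prometheus

- Select DCGM_FI_DEV_GPU_UTIL, choose Execute, and then navigate to the Graph tab to see the expected GPU utilization pattern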

- Stop the Prometheus port-forwarding process

Run the following command line in your host shell:

kill -9 $(ps -aef | grep port-forward | grep -v grep | grep prometheus | awk '{print $2}')

Now we can visualize the DCGM metrics via a Grafana dashboard.

- Retrieve the password to log in to the Grafana UI

kubectl -n prometheus get secret $(kubectl -n prometheus get secrets | grep grafana | cut -d ' ' -f 1) -o jsonpath="{.data.admin-password}" | base64 --decode ; echo
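The `base64 --decode` step is needed because Kubernetes stores the values under a Secret's `.data` field base64-encoded. As a small illustration of that step only, the following Python sketch decodes such a value. The encoded string is a made-up example, not a real credential, and `decode_secret_value` is a hypothetical helper name.

```python
import base64


def decode_secret_value(encoded):
    """Decode one base64-encoded value from a Secret's .data map."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    # "cGFzc3dvcmQ=" is a made-up example value, not a real credential.
    print(decode_secret_value("cGFzc3dvcmQ="))
```

Keep in mind that base64 is an encoding, not encryption: anyone who can read the Secret can recover the password.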

- Port-forward the Grafana service

Run the following command line in your host shell:

kubectl port-forward -n prometheus svc/$(kubectl -n prometheus get svc | grep grafana | cut -d ' ' -f 1) 8080:80 &

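- Log in to the Grafana UI

Access the Grafana UI login screen the same way as you accessed the Prometheus UI earlier. If using Cloud9, select Preview->Preview Running Application, then pop out in a new window. If using your local host or an EC2 instance with remote desktop, visit the URL http://localhost:8080. Log in with the user name admin and the password you retrieved earlier.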

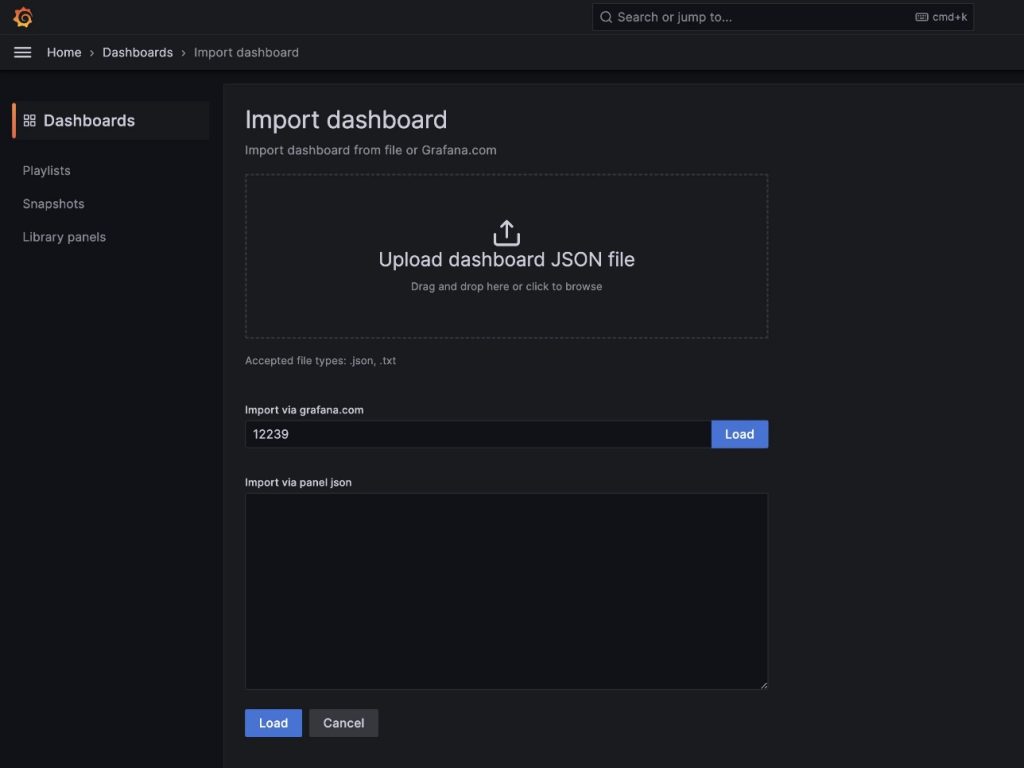

- In the navigation pane, choose Dashboards

- Choose New and Import

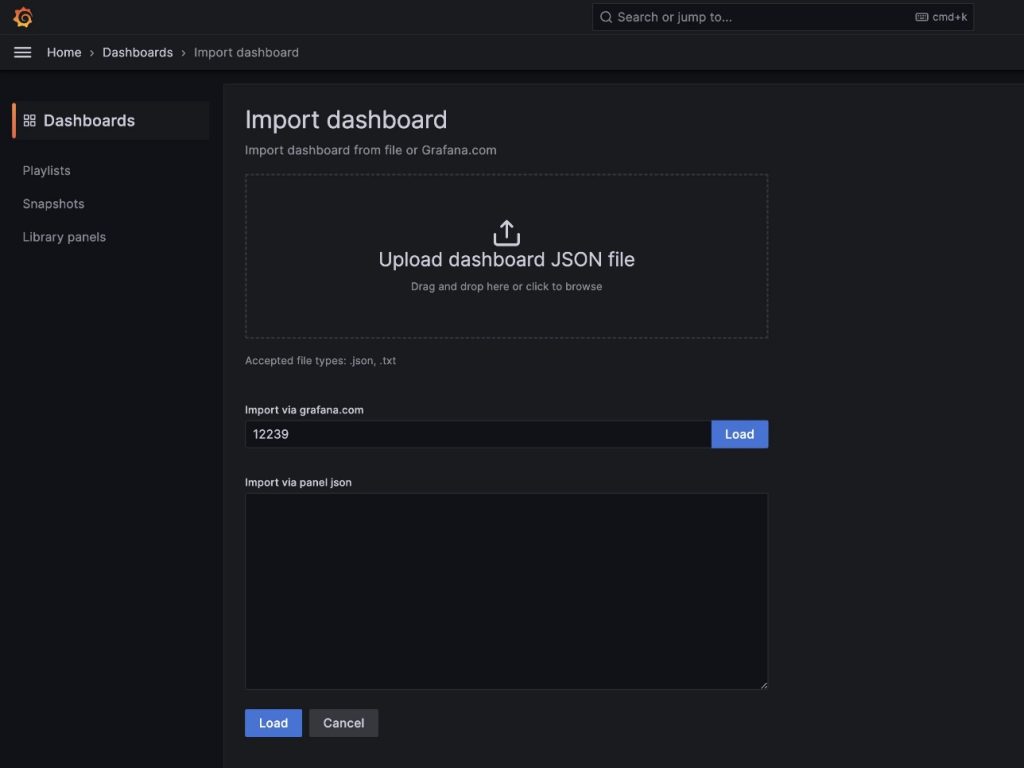

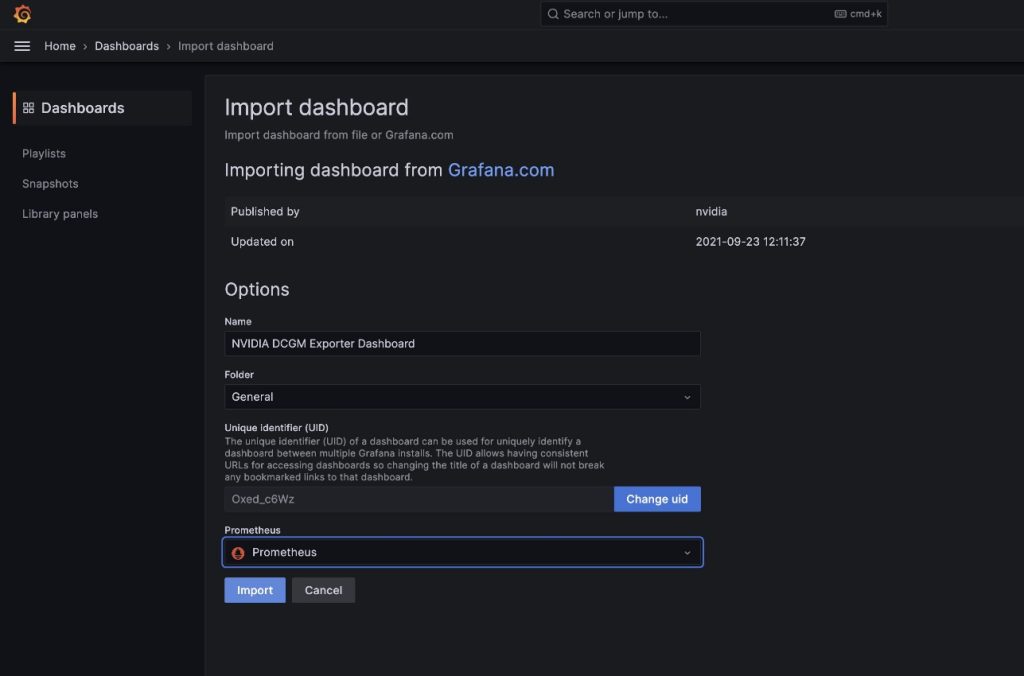

We import the default DCGM Grafana dashboard described in NVIDIA DCGM Exporter Dashboard.

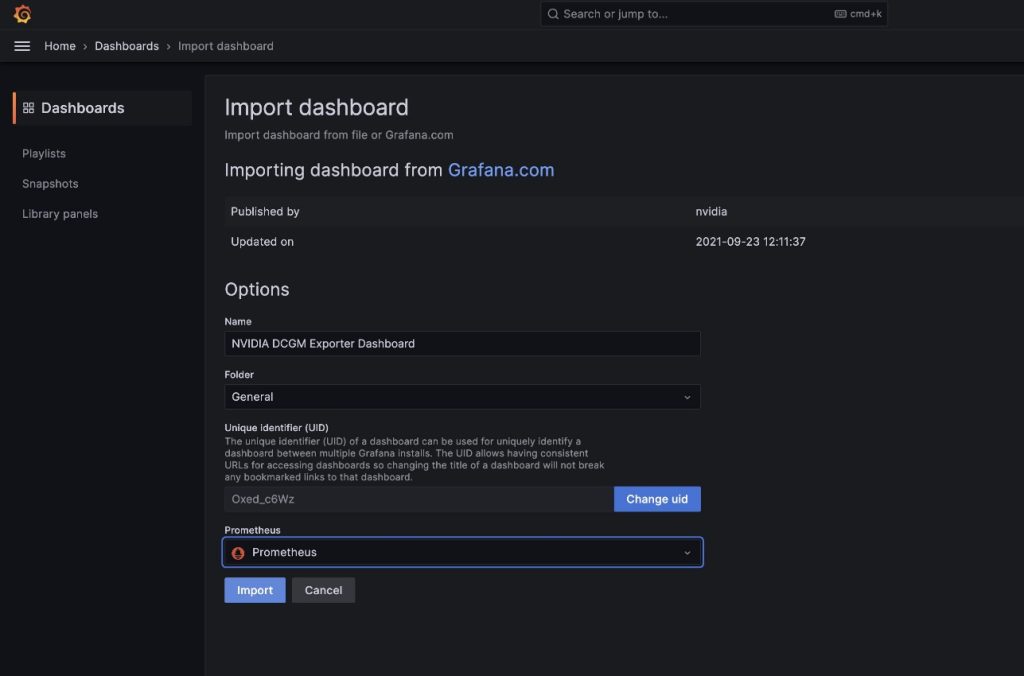

- In the field import via grafana.com, enter 12239 and choose Load

- Choose Prometheus as the data source

- Choose Import

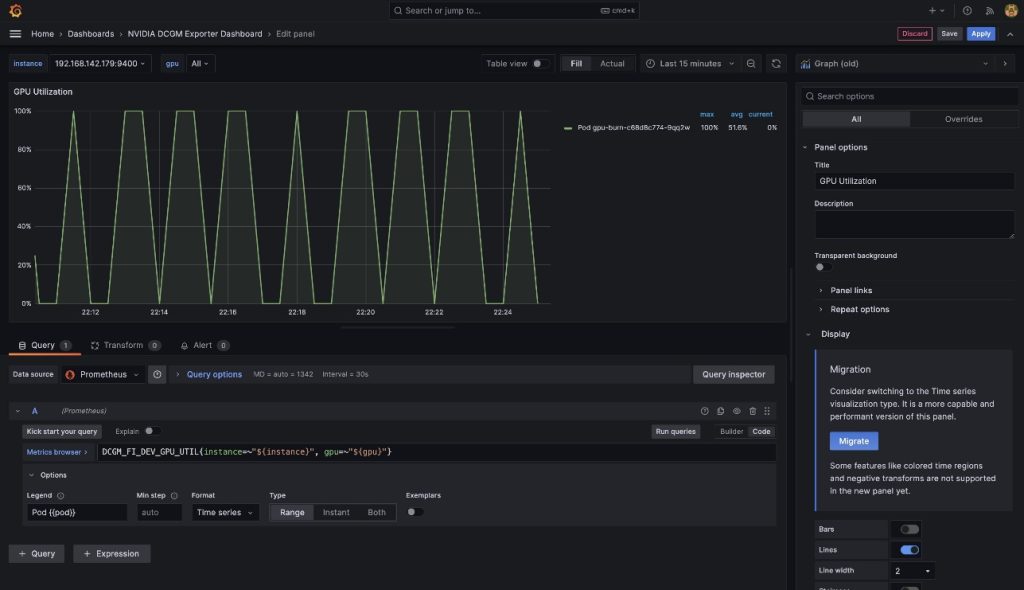

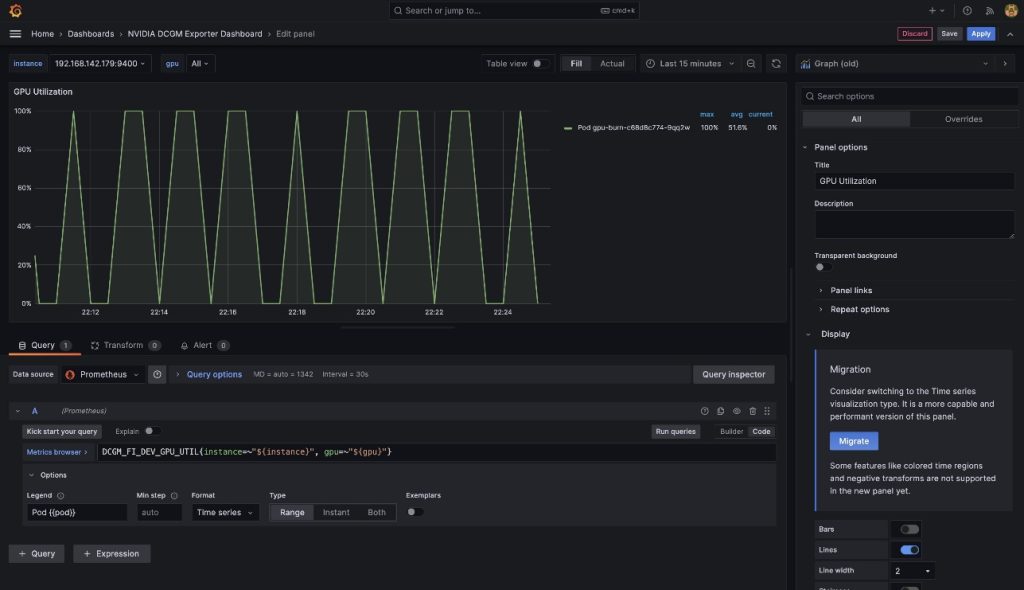

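You will see a dashboard similar to the one in the following screenshot.

To demonstrate that these metrics are pod-based, we modify the GPU Utilization pane in this dashboard.

- Choose the pane and the options menu (three dots)

- Expand the Options section and edit the Legend field

- Replace the value there with Pod {{pod}}, then choose Save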

The legend now shows the gpu-burn pod name associated with the displayed GPU utilization.
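The legend value is a template: each `{{label}}` placeholder is filled in from the labels of the series being drawn, which is why the `pod` label attached by the DCGM exporter ends up in the legend. The Python sketch below imitates that substitution for illustration. It is not Grafana code, `render_legend` is a hypothetical name, and rendering unknown labels as an empty string is an assumption of this sketch.

```python
import re

# Matches {{label}} with optional whitespace inside the braces.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_legend(template, labels):
    """Replace each {{label}} placeholder with the series' label value."""
    return PLACEHOLDER.sub(lambda m: labels.get(m.group(1), ""), template)


if __name__ == "__main__":
    series_labels = {"gpu": "0", "pod": "gpu-burn-c68d8c774-ltg9s"}
    print(render_legend("Pod {{pod}}", series_labels))
```

The same mechanism allows richer legends, for example a template that combines the `namespace` and `pod` labels.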

- Stop port-forwarding the Grafana UI service

Run the following command in your host shell:

kill -9 $(ps -aef | grep port-forward | grep -v grep | grep prometheus | awk '{print $2}')

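In this post, we demonstrated using open-source Prometheus and Grafana deployed to the EKS cluster. If desired, this deployment can be substituted with Amazon Managed Service for Prometheus and Amazon Managed Grafana.

Clean up

To clean up the resources you created, run the following script from the aws-do-eks container shell:

Conclusion

In this post, we used the NVIDIA DCGM Exporter to collect GPU metrics and visualize them with either CloudWatch or Prometheus and Grafana. We invite you to use the architectures demonstrated here to enable GPU utilization monitoring with NVIDIA DCGM in your own AWS environment.

Additional resources

About the authors

Amr Ragab is a former Principal Solutions Architect for EC2 Accelerated Computing at AWS. He is devoted to helping customers run computational workloads at scale. In his spare time, he likes traveling and finding new ways to integrate technology into daily life.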

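Alex Iankoulski is a Principal Solutions Architect for Self-managed Machine Learning at AWS. He is a full-stack software and infrastructure engineer who likes to do deep, hands-on work. In his role, he focuses on helping customers with containerization and orchestration of ML and AI workloads on container-powered AWS services. He is also the author of the open-source do framework and a Docker captain who loves applying container technologies to accelerate the pace of innovation while solving the world's biggest challenges. During the past 10 years, Alex has worked on democratizing AI and ML, combating climate change, and making travel safer, healthcare better, and energy smarter.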

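Keita Watanabe is a Senior Solutions Architect of Frameworks ML Solutions at Amazon Web Services, where he helps develop the industry's best cloud-based self-managed machine learning solutions. His background is in machine learning research and development. Prior to joining AWS, Keita worked in the e-commerce industry. Keita holds a Ph.D. in Science from the University of Tokyo.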

- Source: https://aws.amazon.com/blogs/machine-learning/enable-pod-based-gpu-metrics-in-amazon-cloudwatch/
