Industrial applications of machine learning are commonly composed of various items that have differing data modalities or feature distributions. Heterogeneous graphs (HGs) offer a unified view of these multimodal data systems by defining multiple types of nodes (for each data type) and edges (for the relation between data items). For instance, e-commerce networks might have [user, product, review] nodes or video platforms might have [channel, user, video, comment] nodes. Heterogeneous graph neural networks (HGNNs) learn node embeddings summarizing each node’s relationships into a vector. However, in real-world HGs, there is often a label imbalance issue between different node types. This means that label-scarce node types cannot exploit HGNNs, which hampers the broader applicability of HGNNs.

In "Zero-shot Transfer Learning within a Heterogeneous Graph via Knowledge Transfer Networks", presented at NeurIPS 2022, we propose a model called a Knowledge Transfer Network (KTN), which transfers knowledge from label-abundant node types to zero-labeled node types using the rich relational information given in a HG. We describe how we pre-train a HGNN model without the need for fine-tuning. KTNs outperform state-of-the-art transfer learning baselines by up to 140% on zero-shot learning tasks, and can be used to improve many existing HGNN models on these tasks by 24% (or more).

| KTNs transform labels from one type of information (squares) through a graph to another type (stars). |

What is a heterogeneous graph?

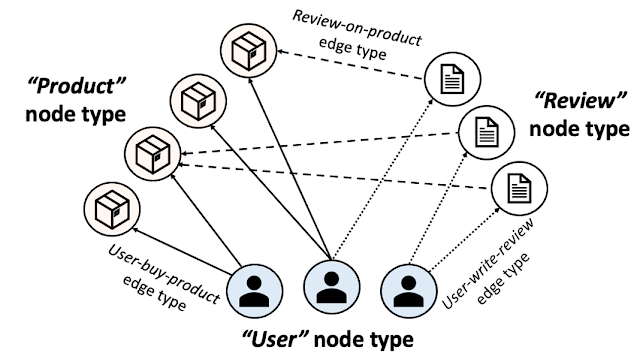

A HG is composed of multiple node and edge types. The figure below shows an e-commerce network presented as a HG. In e-commerce, “users” purchase “products” and write “reviews”. A HG presents this ecosystem using three node types [user, product, review] and three edge types [user-buy-product, user-write-review, review-on-product]. Individual products, users, and reviews are then presented as nodes and their relationships as edges in the HG with the corresponding node and edge types.

| E-commerce heterogeneous graph. |

In addition to all connectivity information, HGs are commonly given with input node attributes that summarize each node’s information. Input node attributes could have different modalities across different node types. For instance, images of products could be given as input node attributes for the product nodes, while text can be given as input attributes to review nodes. Node labels (e.g., the category of each product or the category that most interests each user) are what we want to predict on each node.
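To make the structure concrete, here is a minimal sketch of how the e-commerce HG described above could be held in memory. The `HeteroGraph` class, its field names, and the example attribute values are all hypothetical and chosen for illustration; they are not taken from the paper or from any graph library.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class HeteroGraph:
    """A toy heterogeneous graph: typed nodes, typed edges, per-node attributes."""
    # node_type -> {node_id: attribute}; the attribute modality may differ per type
    nodes: dict = field(default_factory=lambda: defaultdict(dict))
    # (src_type, relation, dst_type) -> list of (src_id, dst_id) pairs
    edges: dict = field(default_factory=lambda: defaultdict(list))
    # node_type -> {node_id: label}; some node types may have no labels at all
    labels: dict = field(default_factory=lambda: defaultdict(dict))

    def add_node(self, node_type, node_id, attr, label=None):
        self.nodes[node_type][node_id] = attr
        if label is not None:
            self.labels[node_type][node_id] = label

    def add_edge(self, src_type, relation, dst_type, src_id, dst_id):
        self.edges[(src_type, relation, dst_type)].append((src_id, dst_id))

    def label_counts(self):
        """Number of labeled nodes per node type (0 for zero-labeled types)."""
        return {t: len(self.labels.get(t, {})) for t in self.nodes}


g = HeteroGraph()
# Product nodes carry an image attribute and a category label (made-up values).
g.add_node("product", "p1", attr="p1.jpg", label="electronics")
g.add_node("product", "p2", attr="p2.jpg", label="books")
# User and review nodes have attributes but no labels in this toy example.
g.add_node("user", "u1", attr={"account_age_days": 120})
g.add_node("review", "r1", attr="Great battery life.")
g.add_edge("user", "buy", "product", "u1", "p1")
g.add_edge("user", "write", "review", "u1", "r1")
g.add_edge("review", "on", "product", "r1", "p1")

print(g.label_counts())  # {'product': 2, 'user': 0, 'review': 0}
```

The label counts show the imbalance discussed in the next section: product nodes are labeled, while user and review nodes have none.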

HGNNs and label scarcity issues

HGNNs compute node embeddings that summarize each node’s local structures (including the node and its neighbor’s information). These node embeddings are utilized by a classifier to predict each node’s label. To train a HGNN model and a classifier to predict labels for a specific node type, we require a good amount of labels for the type.

A common issue in industrial applications of deep learning is label scarcity, and with their diverse node types, HGNNs are even more likely to face this challenge. For instance, publicly available content node types (e.g., product nodes) are abundantly labeled, whereas labels for user or account nodes may not be available due to privacy restrictions. This means that in most standard training settings, HGNN models can only learn to make good inferences for a few label-abundant node types and can usually not make any inferences for any remaining node types (given the absence of any labels for them).

Transfer learning on heterogeneous graphs

Zero-shot transfer learning is a technique used to improve the performance of a model on a target domain with no labels by using the knowledge learned by the model from another related source domain with adequately labeled data. To apply transfer learning to solve this label scarcity issue for certain node types in HGs, the target domain would be the zero-labeled node types. Then what would be the source domain? Previous work commonly sets the source domain as the same type of nodes located in a different HG, assuming those nodes are abundantly labeled. This graph-to-graph transfer learning approach pre-trains a HGNN model on the external HG and then runs the model on the original (label-scarce) HG.

However, these approaches are not applicable in many real-world scenarios for three reasons. First, any external HG that could be used in a graph-to-graph transfer learning setting would almost surely be proprietary and thus likely unavailable. Second, even if practitioners could obtain access to an external HG, it is unlikely the distribution of that source HG would match their target HG well enough to apply transfer learning. Finally, node types suffering from label scarcity are likely to suffer the same issue on other HGs (e.g., privacy issues on user nodes).

Our approach: Transfer learning between node types within a heterogeneous graph

Here, we shed light on a more practical source domain, other node types with abundant labels located on the same HG. Instead of using extra HGs, we transfer knowledge within a single HG (assumed to be fully owned by the practitioners) across different types of nodes. More specifically, we pre-train a HGNN model and a classifier on a label-abundant (source) node type, then reuse the models on the zero-labeled (target) node types located in the same HG without additional fine-tuning. The one requirement is that the source and target node types share the same label set (e.g., in the e-commerce HG, product nodes have a label set describing product categories, and user nodes share the same label set describing their favorite shopping categories).

Why is it challenging?

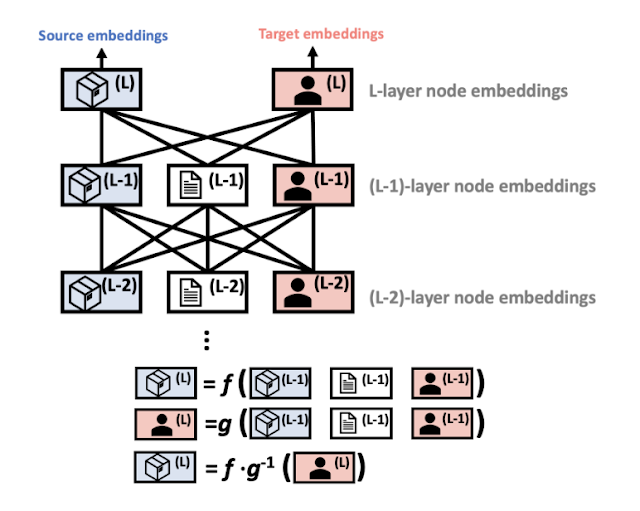

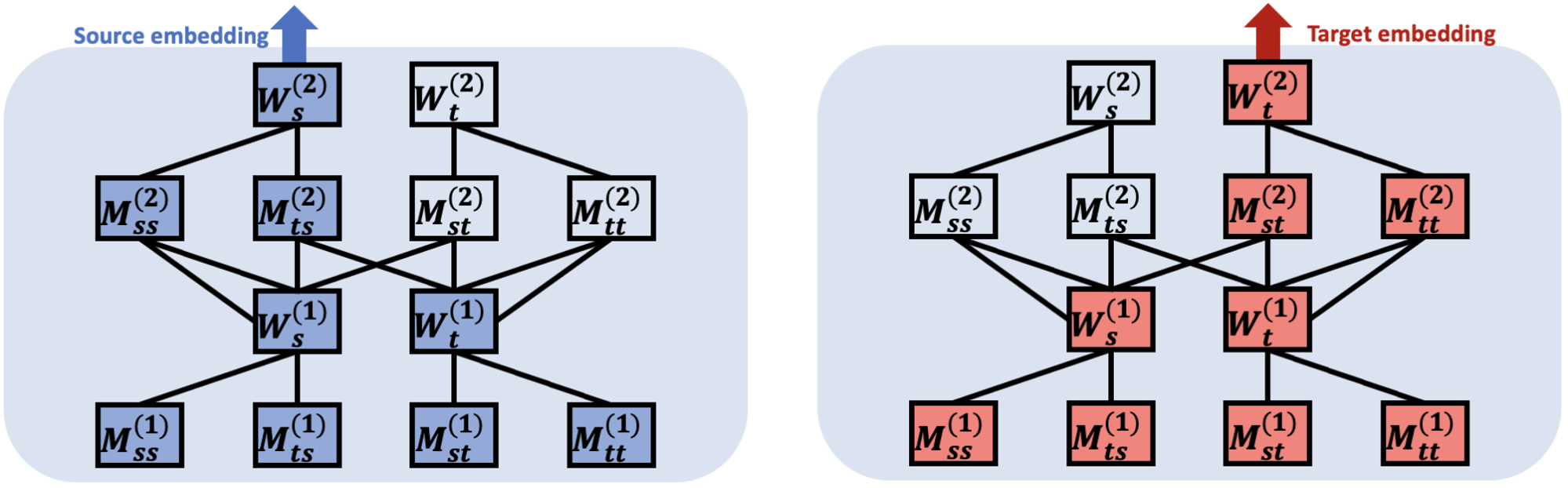

Unfortunately, we cannot directly reuse the pre-trained HGNN and classifier on the target node type. One crucial characteristic of HGNN architectures is that they are composed of modules specialized to each node type to fully learn the multiplicity of HGs. HGNNs use distinct sets of modules to compute embeddings for each node type. In the figure below, blue- and red-colored modules are used to compute node embeddings for the source and target node types, respectively.

| HGNNs are composed of modules specialized to each node type and use distinct sets of modules to compute embeddings of different node types. More details can be found in the paper. |
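The idea of type-specific modules can be sketched with a single simplified message-passing layer. This is an illustrative toy, not the architecture of any particular HGNN: the per-pair weight matrices `W`, the row-normalized adjacencies `A`, the mean aggregation, and the ReLU are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out = 4, 3
n = {"s": 5, "t": 6}  # number of source-type ("s") and target-type ("t") nodes

# Input features for each node type.
X = {k: rng.normal(size=(n[k], d_in)) for k in n}


def random_adjacency(n_dst, n_src):
    """Random row-normalized adjacency of shape (n_dst, n_src)."""
    a = rng.integers(0, 2, size=(n_dst, n_src)).astype(float)
    a[:, 0] = 1.0  # guarantee every destination node has at least one neighbor
    return a / a.sum(axis=1, keepdims=True)


# One adjacency and one weight matrix per (destination type, source type) pair.
A = {(dst, src): random_adjacency(n[dst], n[src]) for dst in n for src in n}
W = {(dst, src): rng.normal(size=(d_in, d_out)) for dst in n for src in n}


def hetero_layer(X, A, W):
    """Compute new embeddings for each node type from all connected types."""
    out = {}
    for dst in X:
        # Each destination type uses its own set of modules W[(dst, *)].
        msgs = sum(A[(dst, src)] @ X[src] @ W[(dst, src)] for src in X)
        out[dst] = np.maximum(msgs, 0.0)  # ReLU
    return out


H = hetero_layer(X, A, W)
print({k: v.shape for k, v in H.items()})  # {'s': (5, 3), 't': (6, 3)}
```

Embeddings for type `"s"` are produced only by `W[("s", "s")]` and `W[("s", "t")]`, and embeddings for type `"t"` only by `W[("t", "s")]` and `W[("t", "t")]`, which is why a classifier trained on one type's embeddings does not carry over directly to the other.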

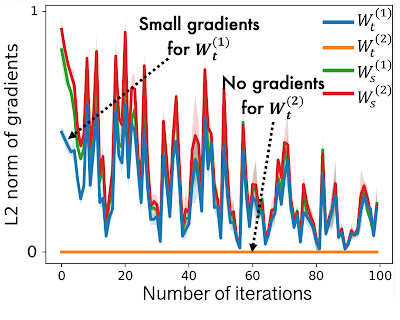

While pre-training HGNNs on the source node type, source-specific modules in the HGNNs are well trained, but target-specific modules are under-trained because only a small amount of gradient flows into them. This is shown below, where we see that the L2 norm of gradients for target node types (i.e., Mtt) is much lower than for source types (i.e., Mss). In this case a HGNN model outputs poor node embeddings for the target node type, which results in poor task performance.

| In HGNNs, target type-specific modules receive zero or only a small amount of gradients during pre-training on the source node type, leading to poor performance on the target node type. |
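The "zero gradient" case can be reproduced in a small numerical experiment. The sketch below assumes a single linear layer with one hypothetical weight matrix per (destination type, source type) pair, a squared-error loss on source nodes only, and finite-difference gradients; none of these choices come from the paper. In this one-layer setting the modules that produce target embeddings never appear in the source loss, so their gradient is exactly zero. In deeper models they would enter only indirectly through earlier layers, which is consistent with the small but non-zero gradients mentioned in the caption.

```python
import numpy as np

rng = np.random.default_rng(0)
types = ("s", "t")            # "s" = source node type, "t" = target node type
n, d = {"s": 5, "t": 6}, 3
X = {k: rng.normal(size=(n[k], d)) for k in types}
# Uniform (mean) aggregation over all nodes of each type, for simplicity.
A = {(dst, src): np.full((n[dst], n[src]), 1.0 / n[src])
     for dst in types for src in types}
W = {(dst, src): rng.normal(size=(d, d)) for dst in types for src in types}
Y_s = rng.normal(size=(n["s"], d))  # stand-in training targets for source nodes


def source_loss(W):
    """Squared error on source nodes; uses only modules with destination 's'."""
    H_s = sum(A[("s", src)] @ X[src] @ W[("s", src)] for src in types)
    return float(np.sum((H_s - Y_s) ** 2))


def grad_norm(key, eps=1e-5):
    """L2 norm of d(loss)/d(W[key]), estimated by central finite differences."""
    g = np.zeros_like(W[key])
    for idx in np.ndindex(*W[key].shape):
        W_plus = {k: v.copy() for k, v in W.items()}
        W_minus = {k: v.copy() for k, v in W.items()}
        W_plus[key][idx] += eps
        W_minus[key][idx] -= eps
        g[idx] = (source_loss(W_plus) - source_loss(W_minus)) / (2 * eps)
    return float(np.linalg.norm(g))


for key in W:
    print(key, grad_norm(key))
```

The printed norms are positive for `("s", "s")` and `("s", "t")` and exactly zero for `("t", "s")` and `("t", "t")`.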

KTN: Trainable cross-type transfer learning for HGNNs

Our work focuses on transforming the (poor) target node embeddings computed by a pre-trained HGNN model to follow the distribution of the source node embeddings. Then the classifier, pre-trained on the source node type, can be reused for the target node type. How can we map the target node embeddings to the source domain? To answer this question, we investigate how HGNNs compute node embeddings to learn the relationship between source and target distributions.

HGNNs aggregate connected node embeddings to augment a target node’s embeddings in each layer. In other words, the node embeddings for both source and target node types are updated using the same input: the previous layer’s node embeddings of any connected node types. This means that they can be represented by each other. We prove this relationship theoretically and find there is a mapping matrix (defined by HGNN parameters) from the target domain to the source domain (more details in Theorem 1 in the paper). Based on this theorem, we introduce an auxiliary neural network, which we refer to as a Knowledge Transfer Network (KTN), that receives the target node embeddings and then transforms them by multiplying them with a (trainable) mapping matrix. We then define a regularizer that is minimized along with the performance loss in the pre-training phase to train the KTN. At test time, we map the target embeddings computed from the pre-trained HGNN to the source domain using the trained KTN for classification.
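A heavily simplified sketch of the mapping step follows. It is not the paper's exact formulation. It assumes a single trainable matrix `T`, a hypothetical row-normalized adjacency `A_st` that aggregates target nodes onto source nodes so the two embedding matrices have matching shapes, and a squared-distance regularizer. It also trains `T` in isolation with plain gradient descent, whereas the text above describes minimizing the regularizer jointly with the performance loss. The embeddings are random stand-ins for the outputs of a pre-trained HGNN.

```python
import numpy as np

rng = np.random.default_rng(0)
n_s, n_t, d = 8, 10, 4

# Stand-ins for embeddings produced by a pre-trained HGNN (random here).
H_t = rng.normal(size=(n_t, d))            # target-type node embeddings
A_st = rng.random(size=(n_s, n_t))         # hypothetical source<-target adjacency
A_st /= A_st.sum(axis=1, keepdims=True)    # row-normalize
T_true = rng.normal(size=(d, d))
H_s = A_st @ H_t @ T_true                  # source embeddings, related by construction


def ktn_map(H_t, T):
    """Map target embeddings into the source embedding space."""
    return A_st @ H_t @ T


def regularizer(T):
    """Squared Frobenius distance between source and mapped target embeddings."""
    return float(np.sum((H_s - ktn_map(H_t, T)) ** 2))


# Gradient descent on the mapping matrix T only.
M = A_st @ H_t
lr = 1.0 / (2.0 * np.linalg.norm(M, 2) ** 2)  # 1 / Lipschitz constant of the gradient
T = np.eye(d)
loss_before = regularizer(T)
for _ in range(500):
    grad = 2.0 * M.T @ (M @ T - H_s)       # gradient of the regularizer w.r.t. T
    T -= lr * grad
loss_after = regularizer(T)

print(loss_before, loss_after)
```

After training, `ktn_map(H_t, T)` lies closer to the source embeddings than it did with the identity mapping, so a classifier fitted on source embeddings has a better chance of being reusable on the mapped target embeddings.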

Experimental results

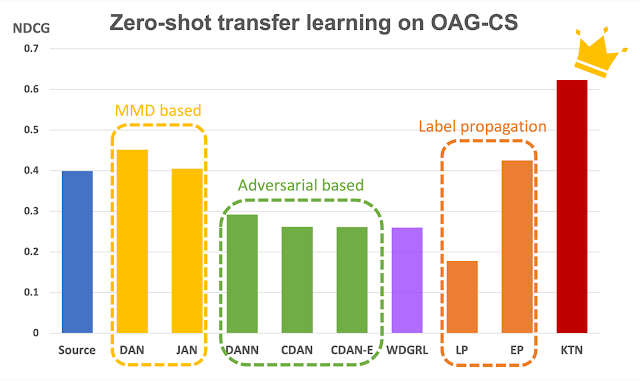

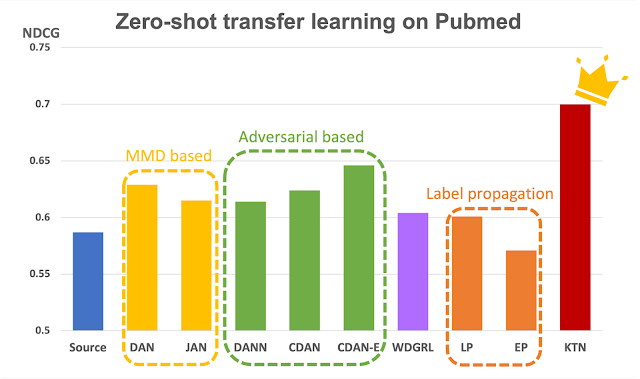

To examine the effectiveness of KTNs, we ran 18 different zero-shot transfer learning tasks on two public heterogeneous graphs, Open Academic Graph and Pubmed. We compare KTN with eight state-of-the-art transfer learning methods (DAN, JAN, DANN, CDAN, CDAN-E, WDGRL, LP, EP). As shown below, KTN consistently outperforms all baselines on all tasks, beating transfer learning baselines by up to 140% (as measured by Normalized Discounted Cumulative Gain, a ranking metric).

| Zero-shot transfer learning on Open Academic Graph (OAG-CS) and Pubmed datasets. The colors represent different categories of transfer learning baselines against which the results are compared. Yellow: Use statistical properties (e.g., mean, variance) of distributions. Green: Use adversarial models to transfer knowledge. Orange: Transfer knowledge directly via graph structure using label propagation. |
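For readers unfamiliar with the metric, the sketch below computes Normalized Discounted Cumulative Gain (NDCG) for a single ranked list using the common linear-gain definition. It illustrates the metric in general; the exact evaluation protocol used in the experiments is not described here, and the relevance lists are made-up examples.

```python
import math


def dcg(relevances):
    """Discounted cumulative gain of relevance scores listed in ranked order."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances))


def ndcg(relevances):
    """DCG divided by the DCG of the ideal (descending) ordering."""
    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal > 0 else 0.0


# Relevance of each candidate label in the order a model ranked them
# (1 = correct label, 0 = incorrect label).
perfect = ndcg([1, 0, 0, 0])   # correct label ranked first
second = ndcg([0, 1, 0, 0])    # correct label ranked second

print(perfect, second)
```

Ranking the correct label first gives an NDCG of 1.0, while ranking it second gives 1 / log2(3), roughly 0.63, so the metric rewards placing correct labels near the top of the ranking.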

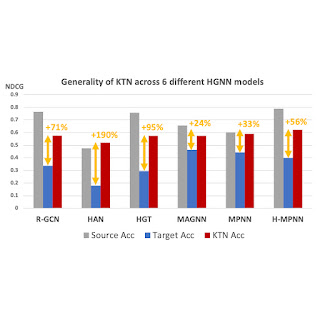

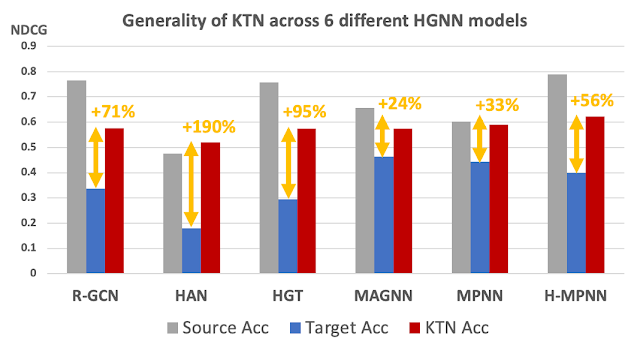

Most importantly, KTN can be applied to almost all HGNN models that have node and edge type-specific parameters and improve their zero-shot performance on target domains. As shown below, KTN improves accuracy on zero-labeled node types across six different HGNN models (R-GCN, HAN, HGT, MAGNN, MPNN, H-MPNN) by up to 190%.

| KTN can be applied to six different HGNN models and improve their zero-shot performance on target domains. |

Takeaways

Various ecosystems in industry can be presented as heterogeneous graphs. HGNNs summarize heterogeneous graph information into effective representations. However, label scarcity issues on certain types of nodes prevent the wider application of HGNNs. In this post, we introduced KTN, the first cross-type transfer learning method designed for HGNNs. With KTN, we can fully exploit the richness of heterogeneous graphs via HGNNs regardless of label scarcity. See the paper for more details.

Acknowledgements

This paper is joint work with our co-authors John Palowitch (Google Research), Dustin Zelle (Google Research), Ziniu Hu (Intern, Google Research), and Russ Salakhutdinov (CMU). We thank Tom Small for creating the animated figure in this blog post.


Teaching old labels new tricks in heterogeneous graphs Republished from Source http://ai.googleblog.com/2023/03/teaching-old-labels-new-tricks-in.html via http://feeds.feedburner.com/blogspot/gJZg


- Source: https://blockchainconsultants.io/teaching-old-labels-new-tricks-in-heterogeneous-graphs/?utm_source=rss&utm_medium=rss&utm_campaign=teaching-old-labels-new-tricks-in-heterogeneous-graphs
